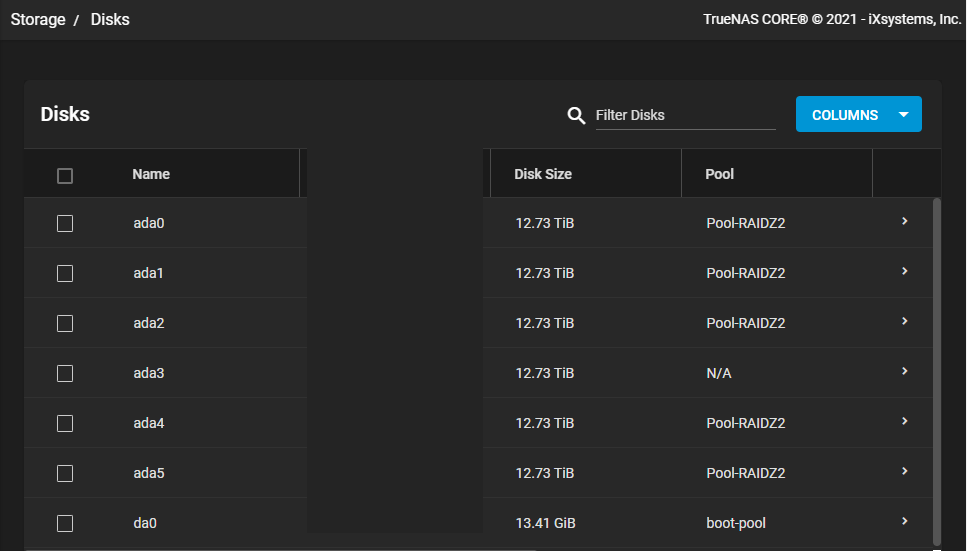

I am currently running TrueNAS-12.0-U7 and am running out of disk space. Instead of deleting files, it seemed easier to buy another drive and expand the pool. I come from a QNAP background, where this was fairly easy to do, but I'm not sure how to do it on TrueNAS. I had 5 x 14TB drives installed in a RAIDZ2 array of 35.28 TiB, which tolerates 2 disk failures. I have installed another identical 14TB drive, but I can't find any how-to's or options for adding it to the existing pool to increase its size. Any advice or direction? I hope I've provided enough detail for my first post to this forum. Thank you in advance...

How to expand pool size after I added another disk

- Thread starter rgorbie

- Start date