hungarianhc · Patron · Joined: Mar 11, 2014 · Messages: 234

TL;DR: My volume is alive, and I can access the files via SSH. However, it is degraded, and I'm currently running "naked": two drives down in my RAID-Z2 pool. I need to fix this...

1) A week ago, I noticed that a disk in my 5-drive RAID-Z2 pool was throwing all sorts of errors. I ordered a new 4TB drive to replace it.

2) I shut down the system, replaced the drive, and rebooted. I did NOT offline the drive first. Yup. Mistake.

3) Now this is the part that is odd. It actually showed the new drive as part of the pool, but said it was unavailable. I know this may sound implausible, and maybe I'm misrepresenting what I saw, but we're past this point and I can't replicate it now because of the following steps.

4) I thought this was odd. I shut the system down. Plugged the old, bad drive in. Rebooted.

5) Now when I went to the replace drive option in the UI, it showed the old dead drive as the one available as a replacement. Weird. My logic at this point was that something in the pool was messed up, so I'd re-add the "bad" drive and then go through the proper replacement steps again.

6) I used the "replace" option to bring the bad drive back into the pool. I had to use the "force" option because it obviously saw traces of a ZFS pool already on the failing drive.

7) Okay, so now it's resilvering onto the failing drive. This will likely take a LONG time, if it ever finishes successfully.

8) Meanwhile, I did a "sanity check" (which, I know, will sound more like insane behavior, and I agree in hindsight) and removed the drive that was resilvering. My logic was that if I rebooted the machine and it showed the resilvering drive as unavailable, I could reattach the new drive, initiate "replace" on it, and be good to go.

9) Here's another bonehead move: I pulled out the WRONG drive. SHIT. Yup. Rebooted FreeNAS. The drive I pulled out now shows as unavailable in the pool.

Okay, so here's where I'm currently at: I've got a dying drive that is in the process of resilvering. It's at 0.38% right now, and I'm not sure when it will finish or if it ever will. Meanwhile, a healthy drive shows up as unavailable in the pool, even though it is plugged in. My new drive isn't even plugged into the box. I know there were bonehead moves all along the way, so go easy on me. I'd love to see how I can move forward from here.
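The progress figures in the zpool status output (42.4G issued at 3.26M/s out of 10.8T total) give a rough idea of how long "a LONG time" might be. This is a minimal sketch, assuming the issue rate stays constant and that ZFS's K/M/G/T suffixes are powers of 1024; `to_bytes`, `percent_done`, and `resilver_eta_days` are illustrative helpers, not ZFS functions.

```python
# Naive resilver ETA from the numbers printed by `zpool status`.
# Assumptions (not from ZFS itself): the issue rate stays constant and the
# K/M/G/T suffixes are powers of 1024, as ZFS tools print them.

UNITS = {"K": 1024, "M": 1024**2, "G": 1024**3, "T": 1024**4}


def to_bytes(size: str) -> float:
    """Convert a ZFS-style size such as '42.4G' or '3.26M' to bytes."""
    return float(size[:-1]) * UNITS[size[-1]]


def percent_done(issued: str, total: str) -> float:
    """Share of the total that has been issued so far, in percent."""
    return 100 * to_bytes(issued) / to_bytes(total)


def resilver_eta_days(issued: str, total: str, rate_per_s: str) -> float:
    """Days left if the remaining data keeps being issued at rate_per_s."""
    remaining = to_bytes(total) - to_bytes(issued)
    return remaining / to_bytes(rate_per_s) / 86400


if __name__ == "__main__":
    print(f"{percent_done('42.4G', '10.8T'):.2f}% done")  # prints "0.38% done"
    print(f"~{resilver_eta_days('42.4G', '10.8T', '3.26M'):.0f} days remaining")  # prints "~40 days remaining"
```

This reproduces the reported 0.38% and works out to roughly 40 days at a constant rate. The actual issue rate may not stay at 3.26M/s, so treat the figure as an order of magnitude only.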


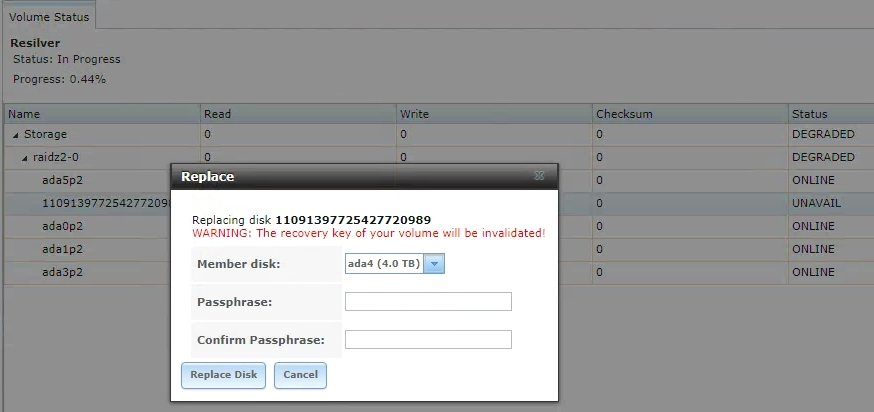


Here is the current zpool status:

```
  pool: Storage
 state: DEGRADED
status: One or more devices is currently being resilvered.  The pool will
        continue to function, possibly in a degraded state.
action: Wait for the resilver to complete.
  scan: resilver in progress since Wed Oct 3 22:37:08 2018
        2.57T scanned at 202M/s, 42.4G issued at 3.26M/s, 10.8T total
        8.34G resilvered, 0.38% done, no estimated completion time
config:

        NAME                                                STATE     READ WRITE CKSUM
        Storage                                             DEGRADED     0     0     0
          raidz2-0                                          DEGRADED     0     0     0
            gptid/5729e8e1-b247-11e3-82da-d050990a6791.eli  ONLINE       0     0     0
            gptid/5796718e-b247-11e3-82da-d050990a6791.eli  ONLINE       0     0     0
            gptid/4e6e50a9-c797-11e8-91c8-e0d55ecf5abd.eli  ONLINE       0     0     0
            11091397725427720989                            UNAVAIL      0     0     0  was /dev/gptid/58e962e4-b247-11e3-82da-d050990a6791.eli
            gptid/594a667f-b247-11e3-82da-d050990a6791.eli  ONLINE       0     0     0

errors: No known data errors
```
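To pull the problem devices out of output like this without eyeballing the columns, a few lines of Python will do. This is a hypothetical helper, not a FreeNAS or ZFS tool: it assumes the usual `NAME STATE READ WRITE CKSUM` layout under `config:` and reports every row whose state is not `ONLINE`, together with any trailing note such as the "was /dev/gptid/..." path ZFS prints for a missing member. The sample text is the status from this post.

```python
import re
from typing import NamedTuple


class Device(NamedTuple):
    name: str
    state: str
    note: str  # trailing text, e.g. the old path of a missing member


# A device row: name, state, three error counters, optional trailing note.
ROW = re.compile(r"^\s+(\S+)\s+([A-Z]+)\s+(\d+)\s+(\d+)\s+(\d+)\s*(.*)$")

SAMPLE = """\
config:

        NAME                                                STATE     READ WRITE CKSUM
        Storage                                             DEGRADED     0     0     0
          raidz2-0                                          DEGRADED     0     0     0
            gptid/5729e8e1-b247-11e3-82da-d050990a6791.eli  ONLINE       0     0     0
            gptid/5796718e-b247-11e3-82da-d050990a6791.eli  ONLINE       0     0     0
            gptid/4e6e50a9-c797-11e8-91c8-e0d55ecf5abd.eli  ONLINE       0     0     0
            11091397725427720989                            UNAVAIL      0     0     0  was /dev/gptid/58e962e4-b247-11e3-82da-d050990a6791.eli
            gptid/594a667f-b247-11e3-82da-d050990a6791.eli  ONLINE       0     0     0

errors: No known data errors
"""


def parse_config(status: str) -> list[Device]:
    """Collect the device rows between the NAME header and 'errors:'."""
    devices = []
    in_config = False
    for line in status.splitlines():
        if line.strip().startswith("NAME"):
            in_config = True
            continue
        if line.startswith("errors:"):
            break
        if in_config:
            m = ROW.match(line)
            if m:
                name, state, _read, _write, _cksum, note = m.groups()
                devices.append(Device(name, state, note))
    return devices


def not_online(status: str) -> list[Device]:
    """Every pool, vdev, or disk row whose state is anything but ONLINE."""
    return [d for d in parse_config(status) if d.state != "ONLINE"]


if __name__ == "__main__":
    for dev in not_online(SAMPLE):
        print(dev.state, dev.name, dev.note)
```

On this output it flags `Storage` and `raidz2-0` as `DEGRADED`, and the missing member appears only under its GUID, `11091397725427720989`, with its former gptid path in the note.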

So... what do I do?

My gut is to stop the resilver, get rid of the dying drive, somehow reassociate the unavailable drive with the pool, and then use my replacement disk in place of the dying drive. Does that make sense? Thoughts? THANKS!!!!

Edit: I took a look in the UI, and the drive that is "unavailable" shows as a replacement drive. See below.

I didn't click "Replace Disk", but it seems to me that if I do, it will probably give me an error saying there is already a ZFS volume on the disk and then offer the option to force it. If I force it, I assume the drive will rejoin the pool and start resilvering. Assuming nothing dies before that resilver finishes, I'll be back to a non-degraded pool with a dying drive, ada0p2 (currently resilvering). Then I can replace that drive with the extra one. Thoughts on this plan?
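The ordering of this plan can be sanity-checked with simple parity arithmetic: RAID-Z2 survives the loss of any two member disks, so the remaining margin is 2 minus the number of members that cannot currently supply their data. This sketch treats a member that is still resilvering as not yet usable, which is a simplifying assumption (a partially resilvered disk does hold some data), and the step labels are a hypothetical paraphrase of the plan above, not FreeNAS states.

```python
from typing import NamedTuple

PARITY = 2  # RAID-Z2: the vdev survives losing any two member disks


class Step(NamedTuple):
    label: str
    unavailable: int  # members missing outright (UNAVAIL or pulled)
    resilvering: int  # members still rebuilding; assumed not yet usable


def margin(step: Step) -> int:
    """Further member failures the vdev could absorb at this step."""
    return PARITY - step.unavailable - step.resilvering


# Hypothetical paraphrase of the plan in the post, one entry per stage.
PLAN = [
    Step("now: healthy disk UNAVAIL, dying disk resilvering", 1, 1),
    Step("force-replace UNAVAIL disk with itself; both resilvering", 0, 2),
    Step("both resilvers done (if the dying disk holds up)", 0, 0),
    Step("replace dying disk with the new 4TB disk", 0, 1),
    Step("new disk resilvered", 0, 0),
]

if __name__ == "__main__":
    for step in PLAN:
        print(f"margin {margin(step)}: {step.label}")
```

Under these assumptions the margin stays at zero until both resilvers complete, so losing any further fully healthy member during that window would exceed what RAID-Z2 can absorb; only afterwards does it climb back to 2.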
