Hello,

I am fairly new to FreeNAS.

Some weeks ago I created a new pool and started with a single mirror. A few days ago I accidentally added a single disk to that pool. Yesterday I removed that single disk from the pool with the `zpool remove poolname vdev` command.

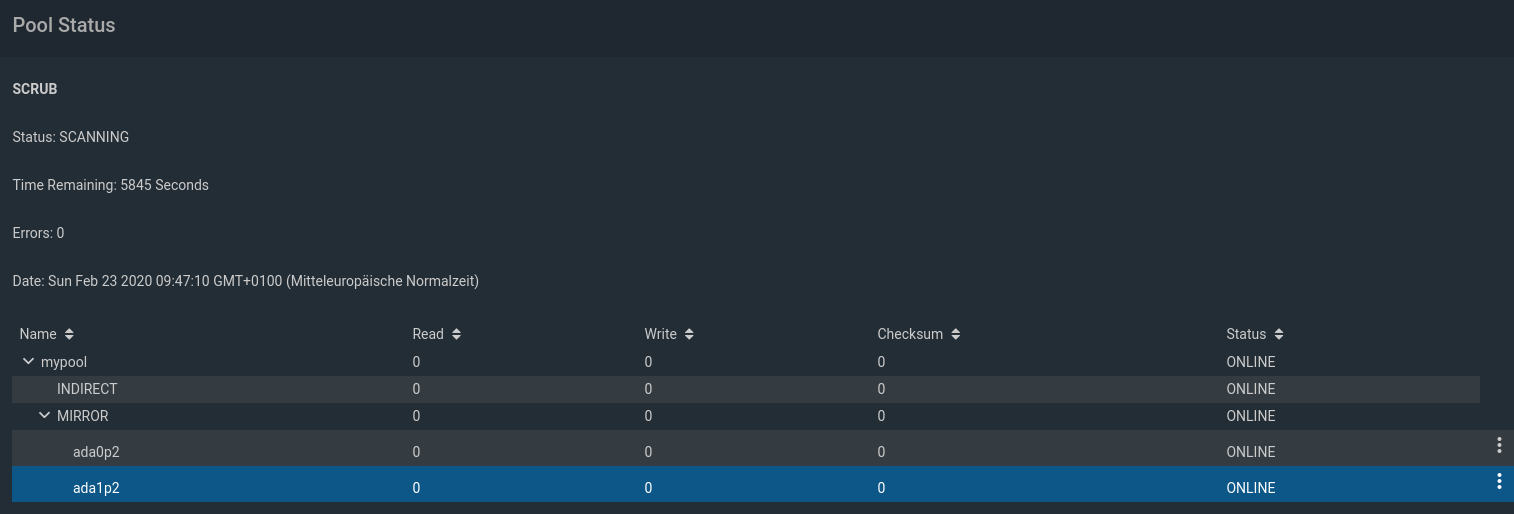

Output of `zpool status`:

```
  pool: mypool
 state: ONLINE
remove: Removal of vdev 0 copied 1.78T in 5h53m, completed on Sat Feb 22 23:10:53 2020
    45.8M memory used for removed device mappings
config:

        NAME                                            STATE     READ WRITE CKSUM
        mypool                                          ONLINE       0     0     0
          mirror-1                                      ONLINE       0     0     0
            gptid/8b511c10-19df-11ea-986b-ac1f6bf61788  ONLINE       0     0     0
            gptid/78a51654-19e3-11ea-986b-ac1f6bf61788  ONLINE       0     0     0
```

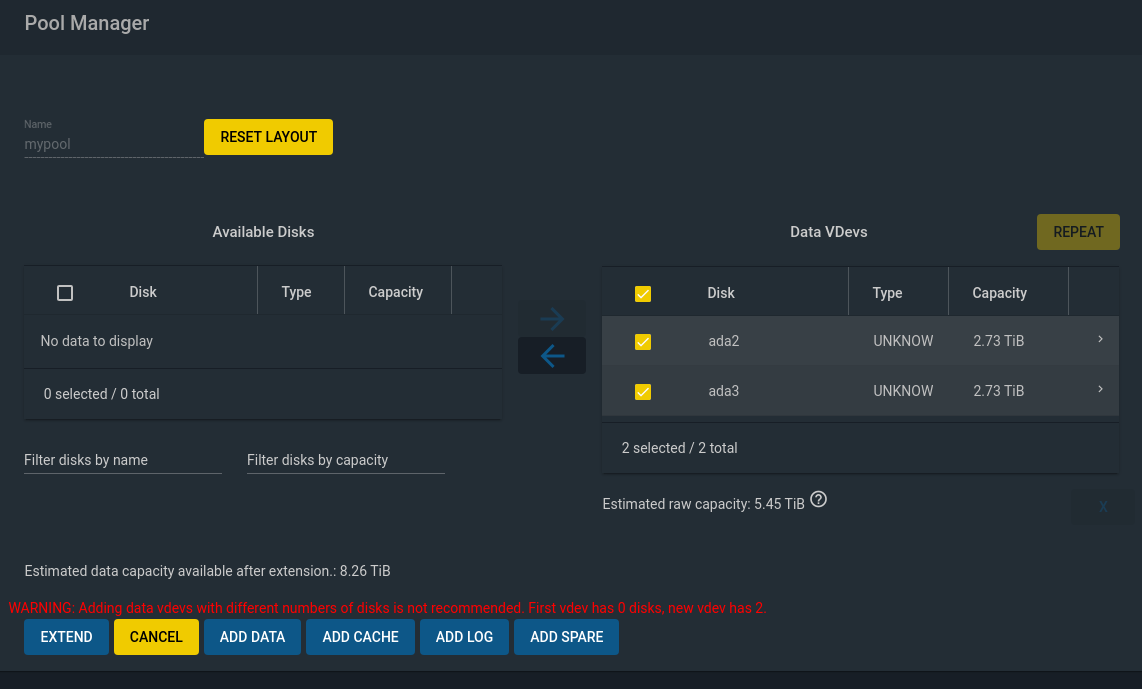

After the removal finished, I tried to add a new vdev to the pool via the GUI, but I get this error message.

After a bit of googling and trying to figure out how the removal process works, I found out that the removed device is replaced by an indirect vdev, along with a mapping table (which `zpool status` also reports) that points to the new location of the data on the remaining disks. I guess this indirect vdev is the one the red message reports as having 0 disks.
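To picture what such a mapping table does, here is a minimal Python sketch under a simplified model: each entry records a contiguous segment that was copied off the removed vdev and where it now lives, and a lookup finds the right segment with a binary search over entries sorted by their old offset. The names `MappingEntry`, `IndirectMapping` and `remap`, and all offsets, are hypothetical; this is not the actual OpenZFS data structure, only an illustration of the idea.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class MappingEntry:
    """One contiguous segment copied off the removed vdev (hypothetical, simplified)."""
    src_offset: int  # offset on the removed (now indirect) vdev
    length: int      # size of the copied segment in bytes
    dst_vdev: int    # id of the vdev that now holds the data
    dst_offset: int  # offset of the segment on that destination vdev


class IndirectMapping:
    """Toy model of an indirect vdev's mapping table, sorted by source offset."""

    def __init__(self, entries):
        self.entries = sorted(entries, key=lambda e: e.src_offset)
        self._starts = [e.src_offset for e in self.entries]

    def remap(self, offset):
        """Translate an offset on the removed vdev to (dst_vdev, dst_offset)."""
        # Find the last segment that starts at or before this offset.
        i = bisect_right(self._starts, offset) - 1
        if i < 0:
            raise KeyError(f"offset {offset} was never mapped")
        entry = self.entries[i]
        # The offset may fall into a gap after that segment (e.g. free space).
        if offset >= entry.src_offset + entry.length:
            raise KeyError(f"offset {offset} was never mapped")
        return entry.dst_vdev, entry.dst_offset + (offset - entry.src_offset)


# Hypothetical example: two 4 KiB segments moved from vdev 0 onto mirror-1 (id 1).
mapping = IndirectMapping([
    MappingEntry(src_offset=0, length=4096, dst_vdev=1, dst_offset=1_000_000),
    MappingEntry(src_offset=8192, length=4096, dst_vdev=1, dst_offset=2_000_000),
])
print(mapping.remap(100))   # (1, 1000100)
print(mapping.remap(8200))  # (1, 2000008)
```

In this model, any block pointer that still refers to the old vdev has to be translated through the table, which is why the table has to be kept around (and held in memory) after the removal completes.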

Is there anything I can do to get rid of this indirect vdev? Or is this just a bug in the GUI, since removal of top-level vdevs is a fairly new feature?