Hello,

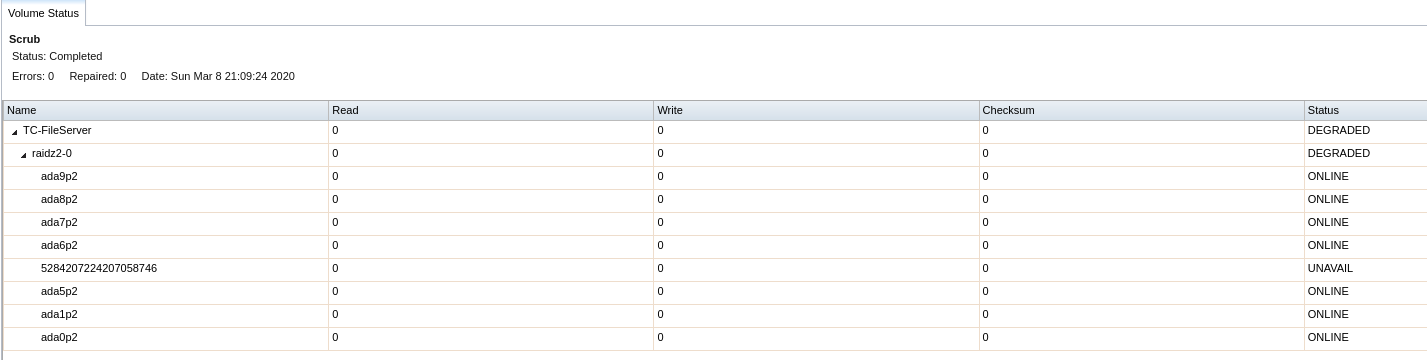

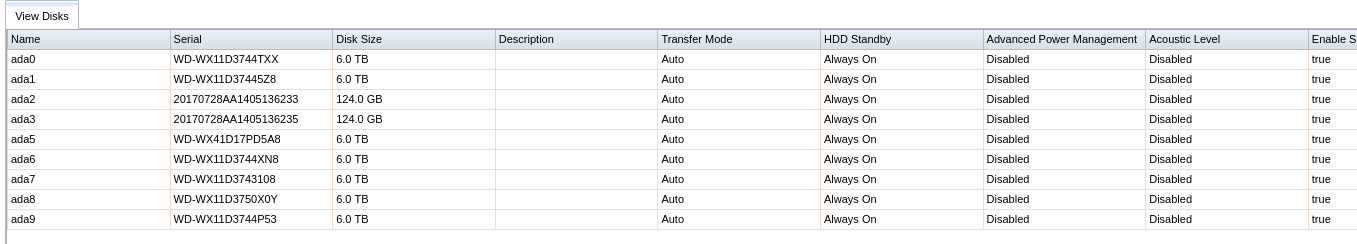

A NAS within the company has a failed drive. I've reviewed the documentation on replacement and it seems easy enough. The issue is that the zpool has an odd configuration that I would not expect, and I'm not sure which path to take for the replacement.

Code:

    redacted@redacted:~ # zpool status
      pool: redacted
     state: DEGRADED
    status: One or more devices could not be opened. Sufficient replicas exist for
            the pool to continue functioning in a degraded state.
    action: Attach the missing device and online it using 'zpool online'.
       see: http://illumos.org/msg/ZFS-8000-2Q
      scan: scrub repaired 0 in 20h8m with 0 errors on Sun Mar 8 21:09:24 2020
    config:

            NAME                                            STATE     READ WRITE CKSUM
            redacted                                        DEGRADED     0     0     0
              raidz2-0                                      DEGRADED     0     0     0
                gptid/47537639-e686-11e7-8f40-d05099c376ba  ONLINE       0     0     0
                gptid/48188022-e686-11e7-8f40-d05099c376ba  ONLINE       0     0     0
                gptid/48e5de2a-e686-11e7-8f40-d05099c376ba  ONLINE       0     0     0
                5284207224207058746                         UNAVAIL      0     0     0  was /dev/gptid/49b35b9b-e686-11e7-8f40-d05099c376ba
                gptid/4a80c22f-e686-11e7-8f40-d05099c376ba  ONLINE       0     0     0
                gptid/4b53736b-e686-11e7-8f40-d05099c376ba  ONLINE       0     0     0
                gptid/4c265376-e686-11e7-8f40-d05099c376ba  ONLINE       0     0     0
                gptid/4cf57b2d-e686-11e7-8f40-d05099c376ba  ONLINE       0     0     0

    errors: No known data errors

      pool: freenas-boot
     state: ONLINE
      scan: scrub repaired 0 in 0h0m with 0 errors on Mon Mar 23 03:45:27 2020
    config:

            NAME          STATE     READ WRITE CKSUM
            freenas-boot  ONLINE       0     0     0
              ada4p2      ONLINE       0     0     0

    errors: No known data errors
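To pull just the unhealthy member out of output like this, something along the following lines may help. It is only a sketch: `status_sample` and `unhealthy_members` are names made up here, the sample is a trimmed copy of the output above, and the list of states it matches is an assumption about what counts as unhealthy.

```shell
#!/bin/sh
# Trimmed copy of the `zpool status` output above, used as sample input.
status_sample='
  pool: redacted
 state: DEGRADED
config:
    NAME                                            STATE     READ WRITE CKSUM
    redacted                                        DEGRADED     0     0     0
      raidz2-0                                      DEGRADED     0     0     0
        gptid/47537639-e686-11e7-8f40-d05099c376ba  ONLINE       0     0     0
        5284207224207058746                         UNAVAIL      0     0     0  was /dev/gptid/49b35b9b-e686-11e7-8f40-d05099c376ba
        gptid/4a80c22f-e686-11e7-8f40-d05099c376ba  ONLINE       0     0     0
'

# Hypothetical helper: print name, state, and last field of every line whose
# second column is one of the listed states. For an UNAVAIL member the last
# field is the former device path that zpool prints after "was".
unhealthy_members() {
    awk '$2 ~ /^(UNAVAIL|FAULTED|OFFLINE|REMOVED)$/ { print $1, $2, $NF }'
}

printf '%s\n' "$status_sample" | unhealthy_members
```

On a live system the same filter could be fed from `zpool status redacted` instead of the sample text.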

Code:

    redacted@redacted:~ # camcontrol devlist
    <WDC WD60EFRX-68L0BN1 82.00A82>    at scbus0 target 0 lun 0 (pass0,ada0)
    <WDC WD60EFRX-68L0BN1 82.00A82>    at scbus1 target 0 lun 0 (pass1,ada1)
    <2.5" SATA SSD 3MG2-P M150821>     at scbus2 target 0 lun 0 (pass2,ada2)
    <2.5" SATA SSD 3MG2-P M150821>     at scbus3 target 0 lun 0 (pass3,ada3)
    <16GB SATA Flash Drive SFDK002A>   at scbus4 target 0 lun 0 (pass4,ada4)
    <Marvell Console 1.01>             at scbus9 target 0 lun 0 (pass5)
    <WDC WD60EFRX-68L0BN1 82.00A82>    at scbus10 target 0 lun 0 (pass6,ada5)
    <WDC WD60EFRX-68L0BN1 82.00A82>    at scbus12 target 0 lun 0 (pass7,ada6)
    <WDC WD60EFRX-68L0BN1 82.00A82>    at scbus13 target 0 lun 0 (pass8,ada7)
    <WDC WD60EFRX-68L0BN1 82.00A82>    at scbus14 target 0 lun 0 (pass9,ada8)
    <WDC WD60EFRX-68L0BN1 82.00A82>    at scbus15 target 0 lun 0 (pass10,ada9)

My question is: why would somebody build an array with seven 6 TB HDDs and one 124 GB SSD? Is the SSD performing some specific function that is not obvious to me?

Does this not affect the redundancy of the array?

One of the two 124 GB SSDs is the drive that failed. It seems the other is just sitting there, perhaps as a warm spare? I could use that (theoretically) good drive as the replacement, but I don't see an obvious way to tell which of the two SSDs is the fail(ed/ing) drive.

Is the best course of action to replace the SSD with an equivalent 6 TB HDD? It seems like the answer is probably yes.

Thanks for any input you can lend.

I didn't realize that the failed drive was missing from all of these views because of the failure. I was able to purchase an identical replacement.
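In case it helps someone else, here is a rough sketch of the cross-check that would have shown this sooner: count the WD60EFRX drives the OS can still see and compare that with the number of members in the raidz2 vdev. The variable names are made up for illustration, the device list is copied from the `camcontrol devlist` output above, and the comparison assumes all eight raidz2 members are WD60EFRX drives, which the output alone does not prove.

```shell
#!/bin/sh
# Device list copied from the `camcontrol devlist` output above.
devlist_sample='<WDC WD60EFRX-68L0BN1 82.00A82> at scbus0 target 0 lun 0 (pass0,ada0)
<WDC WD60EFRX-68L0BN1 82.00A82> at scbus1 target 0 lun 0 (pass1,ada1)
<2.5" SATA SSD 3MG2-P M150821> at scbus2 target 0 lun 0 (pass2,ada2)
<2.5" SATA SSD 3MG2-P M150821> at scbus3 target 0 lun 0 (pass3,ada3)
<16GB SATA Flash Drive SFDK002A> at scbus4 target 0 lun 0 (pass4,ada4)
<Marvell Console 1.01> at scbus9 target 0 lun 0 (pass5)
<WDC WD60EFRX-68L0BN1 82.00A82> at scbus10 target 0 lun 0 (pass6,ada5)
<WDC WD60EFRX-68L0BN1 82.00A82> at scbus12 target 0 lun 0 (pass7,ada6)
<WDC WD60EFRX-68L0BN1 82.00A82> at scbus13 target 0 lun 0 (pass8,ada7)
<WDC WD60EFRX-68L0BN1 82.00A82> at scbus14 target 0 lun 0 (pass9,ada8)
<WDC WD60EFRX-68L0BN1 82.00A82> at scbus15 target 0 lun 0 (pass10,ada9)'

# Number of member rows under raidz2-0 in the `zpool status` output above.
pool_members=8

# Count the WD60EFRX drives that still show up in the device list.
visible_hdds=$(printf '%s\n' "$devlist_sample" | grep -c 'WDC WD60EFRX')

echo "visible WD60EFRX drives: $visible_hdds"
echo "raidz2-0 members:        $pool_members"
echo "missing from devlist:    $((pool_members - visible_hdds))"
```

A non-zero difference suggests the failed pool member is one of the hard drives that no longer enumerates, rather than either SSD.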
