TL;DR: my testing shows it is not safe to use an MTU of 9000 with the VMXNET3 network driver on a FreeNAS 9.3 VM running on VMware ESXi 6. The same configuration works very well with the default MTU value.

I've been testing FreeNAS over the last month and a half on the following hardware:

I configured a separate data network using Ben Bryan's excellent guide here:

https://b3n.org/freenas-9-3-on-vmware-esxi-6-0-guide/

Part of this setup included using an MTU of 9000 on the VMware virtual switch as well as the attached FreeNAS VMXNET3-based NIC. As we will see, this turns out to be a problem.

I configured two Windows VMs (one Windows 7 w/ 1 vCPU + 2GB RAM, the other Windows 2012R2 w/ 1vCPU + 1GB RAM) and installed the CrystalDiskMark and ATTO disk benchmarks on both.

I used these VMs to test FreeNAS as a VMware datastore. I tried both NFS and iSCSI; with and without a SLOG and/or L2ARC SSD device; with and without 'sync' enabled, etc.

What I found is that, in all cases, the FreeNAS-based datastore would break down under a heavy load, in this case, when simultaneously running the ATTO benchmark on both Windows VMs. The VMs would appear to freeze and the vSphere client would display the datastore using the italicized typeface indicating it was offline. Usually the datastore would eventually 'wake up'; the VMs would become responsive; and the benchmarks would finish, though with abysmal performance.

On the FreeNAS system log I would see this sequence of messages for iSCSI-based datastores:

There is an unresolved Bug report about this type of problem here:

https://bugs.pcbsd.org/issues/7622

NFS-based datastores behaved exactly the same way, though without any log entries.

Note that, when run singly, the Windows VM benchmarks indicated absolutely stellar performance - I/O rates over 1000MB/s. I wanted this kind of performance for my VMs! But reliability comes before performance in my book, and these VMXNET3-based datastores simply weren't reliable. So I kept testing...

I tried re-compiling the VMXNET3 driver, per David E's excellent instructions detailed here:

https://forums.freenas.org/index.php?threads/vmxnet3-ko-for-freenas-9-x-in-esxi-5-5.18280/

But all to no avail.

When I switched over to the E1000 driver, everything worked fine. No failures, but with reduced performance - especially write performance.

Finally, I tried reconfiguring the system with the VMXNET3 driver but with the standard MTU value. Voila! No datastore failures!

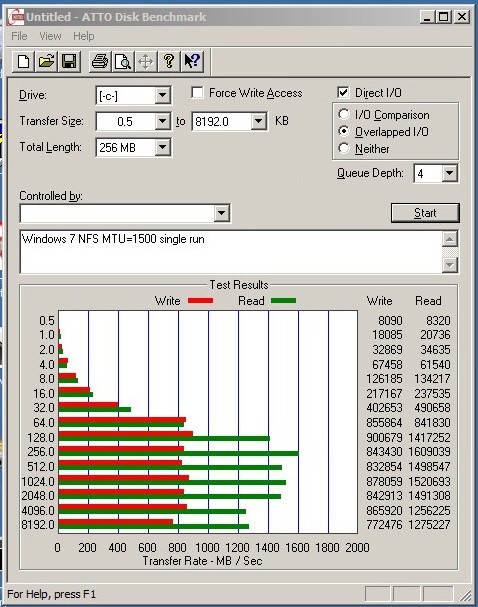

Here are ATTO benchmark results from a single run. Pretty nice I/O rates for spinning rust!

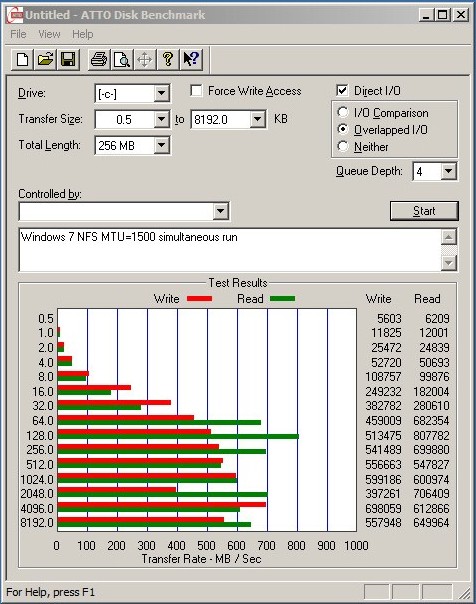

Here is the same benchmark, run simultaneously with another VM. There is a clear reduction in speed, but performance is still quite acceptable:

I've been testing FreeNAS over the last month and a half on the following hardware:

- Supermicro X10SL7-F, Intel Xeon E3-1241v3 @3.5GHz, 32GB Crucial ECC RAM

- Motherboard LSI 2308 controller passed through to FreeNAS VM via VT-d per best practices

- Disk configuration: 7 x 2TB HGST 7K4000 drives set up as a single RAIDZ2 vdev for a 10TB pool

- VMware ESXi v6.0.0 (build 2715440) booting from a USB stick

- FreeNAS build FreeNAS-9.3-STABLE-201506162331 installed with the mirroring option on a pair of SSD datastores

- FreeNAS configured with 2 vCPU and 16GB RAM

I configured a separate data network using Ben Bryan's excellent guide here:

https://b3n.org/freenas-9-3-on-vmware-esxi-6-0-guide/

Part of this setup included using an MTU of 9000 on the VMware virtual switch as well as the attached FreeNAS VMXNET3-based NIC. As we will see, this turns out to be a problem.
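A common way to check that jumbo frames really pass end to end is a don't-fragment ping sized to fill the MTU exactly. The sketch below only does the payload arithmetic. It assumes IPv4 with no options (20-byte header) and an 8-byte ICMP echo header; the function name is made up for illustration and is not from the guide.

```python
IPV4_HEADER = 20  # bytes, assuming no IP options
ICMP_HEADER = 8   # bytes, ICMP echo header


def max_ping_payload(mtu: int) -> int:
    """Largest ICMP echo payload that fits in one unfragmented IPv4 packet."""
    if mtu <= IPV4_HEADER + ICMP_HEADER:
        raise ValueError("MTU too small to carry an ICMP echo")
    return mtu - IPV4_HEADER - ICMP_HEADER


if __name__ == "__main__":
    for mtu in (1500, 9000):
        print(f"MTU {mtu}: ping payload {max_ping_payload(mtu)} bytes")
```

For an MTU of 9000 this gives 8972 bytes, the size usually passed to a don't-fragment ping (for example `ping -D -s 8972` on FreeBSD or `vmkping -d -s 8972` on ESXi). Note that a ping like this only shows that single large frames get through. It would not necessarily reveal a failure that appears only under sustained load.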

I configured two Windows VMs (one Windows 7 w/ 1 vCPU + 2GB RAM, the other Windows Server 2012 R2 w/ 1 vCPU + 1GB RAM) and installed the CrystalDiskMark and ATTO disk benchmarks on both.

I used these VMs to test FreeNAS as a VMware datastore. I tried both NFS and iSCSI; with and without a SLOG and/or L2ARC SSD device; with and without 'sync' enabled, etc.

What I found is that, in all cases, the FreeNAS-based datastore would break down under heavy load: in this case, when running the ATTO benchmark simultaneously on both Windows VMs. The VMs would appear to freeze, and the vSphere client would display the datastore in the italicized typeface that indicates it is offline. Usually the datastore would eventually 'wake up', the VMs would become responsive again, and the benchmarks would finish, though with abysmal performance.

On the FreeNAS system log I would see this sequence of messages for iSCSI-based datastores:

Code:

    WARNING: 10.0.58.1 (iqn.1998-01.com.vmware:554fa508-b47d-0416-fffc-0cc47a3419a2-2889c35f): no ping reply (NOP-Out) after 5 seconds; dropping connection
    WARNING: 10.0.58.1 (iqn.1998-01.com.vmware:554fa508-b47d-0416-fffc-0cc47a3419a2-2889c35f): connection error; dropping connection
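To see how often this happens during a test run, the drops can be tallied from a saved copy of the log. This is a minimal sketch: the regular expression is modeled only on the two WARNING lines quoted above, so other message formats will not match, and the function name is hypothetical.

```python
import re
from collections import Counter

# Pattern modeled on the two WARNING lines quoted in the post (an assumption:
# other iSCSI target messages may be worded differently and will be ignored).
DROP_RE = re.compile(
    r"WARNING: (?P<ip>\S+) \((?P<iqn>[^)]+)\): (?P<reason>.+?); dropping connection"
)


def count_drops(log_text: str) -> Counter:
    """Count dropped iSCSI connections per (initiator IP, reason)."""
    return Counter(
        (m.group("ip"), m.group("reason")) for m in DROP_RE.finditer(log_text)
    )


if __name__ == "__main__":
    iqn = "iqn.1998-01.com.vmware:554fa508-b47d-0416-fffc-0cc47a3419a2-2889c35f"
    sample = (
        f"WARNING: 10.0.58.1 ({iqn}): no ping reply (NOP-Out) after 5 seconds; "
        "dropping connection\n"
        f"WARNING: 10.0.58.1 ({iqn}): connection error; dropping connection\n"
    )
    for (ip, reason), n in count_drops(sample).items():
        print(f"{ip}: {reason} x{n}")
```

Comparing the tallies from a run at MTU 9000 and a run at the default MTU would give a rough, repeatable measure of the difference in reliability.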

There is an unresolved bug report about this type of problem here:

https://bugs.pcbsd.org/issues/7622

NFS-based datastores behaved exactly the same way, though without any log entries.

Note that, when run one at a time, the Windows VM benchmarks indicated absolutely stellar performance - I/O rates over 1000 MB/s. I wanted this kind of performance for my VMs! But reliability comes before performance in my book, and these VMXNET3-based datastores simply weren't reliable. So I kept testing...
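For a sense of why jumbo frames are tempting at these rates, here is a rough back-of-the-envelope calculation of how many packets per second it takes to carry 1000 MB/s at each MTU. It rests on several assumptions: 1 MB is taken as 10^6 bytes, every packet is full-sized, and each one carries 40 bytes of IPv4 + TCP headers with no options. The function name is hypothetical.

```python
def packets_per_second(throughput_mb_s: float, mtu: int, header_bytes: int = 40) -> float:
    """Approximate full-sized TCP packets per second needed for a payload rate.

    header_bytes: IPv4 (20) + TCP (20) with no options, an assumption.
    """
    payload = mtu - header_bytes
    if payload <= 0:
        raise ValueError("MTU must be larger than the header overhead")
    return throughput_mb_s * 1_000_000 / payload


if __name__ == "__main__":
    for mtu in (1500, 9000):
        pps = packets_per_second(1000, mtu)
        print(f"MTU {mtu}: about {pps:,.0f} packets/s")
```

Under those assumptions the default MTU needs roughly 685,000 packets per second against roughly 112,000 at MTU 9000, about a sixfold difference in per-packet work. That is an estimate of the overhead at stake, not a measurement from this system.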

I tried re-compiling the VMXNET3 driver, per David E's excellent instructions detailed here:

https://forums.freenas.org/index.php?threads/vmxnet3-ko-for-freenas-9-x-in-esxi-5-5.18280/

But all to no avail.

When I switched over to the E1000 driver, everything worked fine: no failures, but reduced performance, especially write performance.

Finally, I tried reconfiguring the system with the VMXNET3 driver but with the standard MTU value of 1500. Voila! No datastore failures!

Here are ATTO benchmark results from a single run. Pretty nice I/O rates for spinning rust!

Here is the same benchmark, run simultaneously with another VM. There is a clear reduction in speed, but performance is still quite acceptable: