hugofromboss

Cadet

- Joined: Sep 5, 2022
- Messages: 4

Hello,

I am new to TrueNAS. I've read and searched through the forums for help with this, and below I cover everything I've tried, including my current configuration.

Problem:

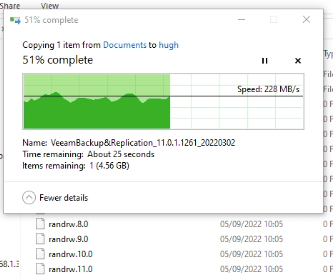

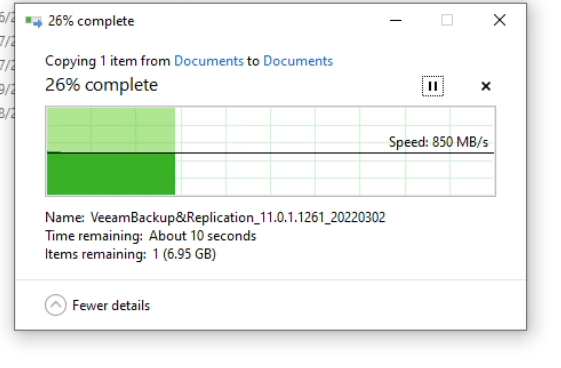

I can achieve local disk performance of 600-700MB/s when running dd or fio. Over the network, however, SMB on Windows achieves only about 200MB/s and SMB on Linux about 150MB/s. When performing an SMB copy on Windows, I get the expected 700MB/s, peaking as high as 1.2GB/s. I get similarly poor speeds on Linux with NFS and iSCSI. I expect network performance to be close to the local 600-700MB/s. The test is a single large 10GB file.
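To put those rates in perspective, here is a small back-of-the-envelope sketch that converts a size in MB and a rate in MB/s into seconds for the 10GB test file. The helper name `transfer_secs` is made up for illustration, and it assumes decimal units (10GB = 10,000MB):

```shell
#!/bin/sh
# Hypothetical helper: seconds needed to move SIZE_MB megabytes at RATE_MBPS MB/s.
# Assumes decimal units (1GB = 1000MB), matching the MB/s figures quoted above.
transfer_secs() {
  awk -v size="$1" -v rate="$2" 'BEGIN { printf "%.1f\n", size / rate }'
}

# The 10GB test file at the observed Linux/Windows SMB rates and at the expected rate.
for rate in 150 200 650; do
  echo "${rate}MB/s: $(transfer_secs 10000 "$rate")s"
done
```

At 200MB/s the 10GB copy takes about 50 seconds; at 650MB/s it would take roughly 15 seconds.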

Hardware:

I'm running TrueNAS-13.0-U2 on bare metal on a Dell R630 (64GB RAM, Xeon E5-2640 v3) with a 40G Mellanox ConnectX-3 Pro NIC and a Dell LSI 6Gb/s SAS card connected to a Dell SC280. The BIOS, iDRAC, HDDs, NIC and SAS controller have all been updated to the latest firmware revisions, and the Dell Lifecycle Controller confirms there are no underlying hardware issues.

ZFS Pool:

I have 3 RAIDZ2 vdevs, each with seven 4TB disks. I also have three Samsung 970 250GB SSDs as cache drives.
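As a quick capacity check for this layout, the sketch below uses the usual simplification that each RAIDZ2 vdev gives up two disks' worth of space to parity. It ignores ZFS metadata, RAIDZ padding and TB-vs-TiB differences, and it leaves out the cache SSDs, so treat the result as a rough upper bound:

```shell
#!/bin/sh
# Layout as described: 3 RAIDZ2 vdevs x 7 disks x 4TB each (cache SSDs excluded).
VDEVS=3
DISKS_PER_VDEV=7
PARITY=2        # RAIDZ2 = two parity disks per vdev
DISK_TB=4

total_disks=$(( VDEVS * DISKS_PER_VDEV ))
data_disks=$(( VDEVS * (DISKS_PER_VDEV - PARITY) ))
raw_data_tb=$(( data_disks * DISK_TB ))

echo "disks: $total_disks, data disks: $data_disks, approx. data capacity: ${raw_data_tb}TB"
```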

This is a newly created pool with less than 5% usage, as I'm still testing TrueNAS.

The pool is configured with xattr=sa, compression=off, encryption=off, dedup=off, atime=off, checksum=on.
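To confirm those properties actually apply to the dataset being shared (rather than only to the pool root), `zfs get` can list them together and show where each value comes from. This is a sketch assuming the dataset is named `pool/test`, inferred from the `/mnt/pool/test` path used in the dd and fio tests; adjust it to match your system. The command only runs where the `zfs` binary exists:

```shell
#!/bin/sh
# Assumed dataset name, inferred from the /mnt/pool/test path; override via DATASET=...
DATASET="${DATASET:-pool/test}"
PROPS="xattr,compression,encryption,dedup,atime,checksum"

if command -v zfs >/dev/null 2>&1; then
  # -o limits the columns; SOURCE shows whether a value is set locally or inherited.
  zfs get -o name,property,value,source "$PROPS" "$DATASET"
else
  echo "zfs not found; run this on the TrueNAS host" >&2
fi
```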

What I've already tried:

iperf3 reports a healthy 8.93 Gbits/sec (1.04 GBytes per one-second interval). The Windows and Linux clients have a 10G NIC.
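As a sanity check on the network numbers, converting the iperf3 rate from Gbit/s to MB/s (decimal units, 8 bits per byte, ignoring protocol overhead) shows how much headroom the 10G client links have compared with the 150-200MB/s seen over SMB. The helper name `gbit_to_mbyte` is hypothetical:

```shell
#!/bin/sh
# Hypothetical helper: convert Gbit/s to MB/s (1 Gbit = 1000 Mbit, 8 bits per byte).
gbit_to_mbyte() {
  awk -v g="$1" 'BEGIN { printf "%.0f\n", g * 1000 / 8 }'
}

link=$(gbit_to_mbyte 8.93)   # iperf3 result quoted above
echo "iperf3 ceiling: ~${link}MB/s"

# Share of that ceiling reached by the observed SMB rates.
for smb in 150 200; do
  awk -v s="$smb" -v l="$link" 'BEGIN { printf "%dMB/s is %.0f%% of the link\n", s, 100 * s / l }'
done
```

By this estimate the link can carry roughly 1.1GB/s, so 200MB/s uses less than a fifth of it.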

MTU is 9000 on the server, client and network.
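One way to confirm that 9000-byte frames really pass end-to-end is a ping with the Don't Fragment bit set and a payload equal to the MTU minus 28 bytes (a 20-byte IPv4 header plus an 8-byte ICMP header). The sketch below only computes the payload; the ping commands in the comments use the standard don't-fragment flags for each OS, 192.168.1.3 is the server address used for SMB, and `<client-ip>` is a placeholder:

```shell
#!/bin/sh
# ICMP payload that exactly fills an IPv4 packet of the given MTU:
# MTU - 20 (IPv4 header) - 8 (ICMP header).
jumbo_payload() {
  echo $(( $1 - 28 ))
}

PAYLOAD=$(jumbo_payload 9000)
echo "payload for MTU 9000: $PAYLOAD"

# With fragmentation disallowed, these should succeed only if jumbo frames work end-to-end:
#   Linux client:      ping -M do -c 3 -s "$PAYLOAD" 192.168.1.3
#   TrueNAS (FreeBSD): ping -D -c 3 -s "$PAYLOAD" <client-ip>
#   Windows client:    ping -f -l 8972 192.168.1.3
```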

The NFS server and client are connected through a single 40G switch.

Autotune is enabled, though it had little effect.

Disks are new and report 0 issues, and SMART reports no issues.

SMB has the following auxiliary parameters:

```
server multi channel support = yes
interfaces = "192.168.1.3;capability=RSS,speed=10000000000"
```

`netstat -s` shows healthy network statistics (no lost frames, etc.).

Linux mount parameters are:

```
sudo mount -o username=hugo,domain=corp,uid=1000,gid=1000,vers=3.0 -t cifs '\\192.168.1.3\test' /mnt/test
```

Windows Get-SMBMultiChannelConnection:

| Server Name | Selected | Client IP | Server IP | Client Interface Index | Server Interface Index | Client RSS Capable | Client RDMA Capable |
|---|---|---|---|---|---|---|---|
| 192.168.1.3 | True | 192.168.1.112 | 192.168.1.3 | 22 | 8 | True | False |
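For a like-for-like comparison with the local `dd` run on the server, the same kind of sequential write can be run from the Linux client against the CIFS mount. This is a sketch assuming GNU coreutils `dd` on the client (for `conv=fdatasync`, which makes `dd` flush the data before printing its rate) and the `/mnt/test` mount point from the mount command; the function name `seq_write_test` is hypothetical. Since the pool has compression off, writing zeros is not flattered by compression:

```shell
#!/bin/sh
# Hypothetical helper: sequentially write COUNT_MIB MiB of zeros into DIR,
# print dd's summary line (bytes, time, rate), then delete the test file.
seq_write_test() {
  dir=$1
  count_mib=$2
  # conv=fdatasync (GNU dd) forces the data out before dd reports its rate.
  dd if=/dev/zero of="$dir/ddtest.bin" bs=1M count="$count_mib" conv=fdatasync 2>&1 | tail -n 1
  rm -f "$dir/ddtest.bin"
}

# Example: 10GiB to the CIFS mount, roughly matching the 10GB test file.
#   seq_write_test /mnt/test 10240
```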

Test results:

Iperf3:

```
[SUM] 0.00-8.42 sec 9.43 GBytes 9.62 Gbits/sec sender
[SUM] 0.00-8.42 sec 0.00 Bytes 0.00 bits/sec receiver
```

FIO:

Command:

```
fio --name=randrw \
    --bs=128k \
    --direct=1 \
    --directory=/mnt/pool/test \
    --ioengine=posixaio \
    --iodepth=32 \
    --group_reporting \
    --numjobs=12 \
    --ramp_time=10 \
    --runtime=60 \
    --rw=randrw \
    --size=256MGB \
    --time_based
```

Results:

```
Jobs: 12 (f=12): [m(12)][100.0%][r=1242MiB/s,w=1238MiB/s][r=9932,w=9902 IOPS][eta 00m:00s]
randrw: (groupid=0, jobs=12): err= 0: pid=5236: Mon Sep 5 10:05:43 2022
  read: IOPS=12.0k, BW=1506MiB/s (1579MB/s)(88.2GiB/60015msec)
    slat (nsec): min=1170, max=1679.7k, avg=2150.70, stdev=5738.54
    clat (usec): min=64, max=86811, avg=10654.82, stdev=3758.73
     lat (usec): min=126, max=86814, avg=10656.97, stdev=3758.69
    clat percentiles (usec):
     | 1.00th=[ 5604], 5.00th=[ 6325], 10.00th=[ 6718], 20.00th=[ 7373],
     | 30.00th=[ 8029], 40.00th=[ 8848], 50.00th=[10028], 60.00th=[11338],
     | 70.00th=[12911], 80.00th=[13960], 90.00th=[15008], 95.00th=[16188],
     | 99.00th=[20841], 99.50th=[22938], 99.90th=[39060], 99.95th=[48497],
     | 99.99th=[73925]
   bw ( MiB/s): min= 911, max= 2477, per=99.92%, avg=1504.53, stdev=34.05, samples=1428
   iops : min= 7287, max=19814, avg=12032.63, stdev=272.40, samples=1428
  write: IOPS=12.0k, BW=1506MiB/s (1579MB/s)(88.3GiB/60015msec); 0 zone resets
    slat (usec): min=3, max=8439, avg= 7.53, stdev=16.22
    clat (usec): min=376, max=99270, avg=21172.64, stdev=6694.30
     lat (usec): min=527, max=99278, avg=21180.17, stdev=6694.27
    clat percentiles (usec):
     | 1.00th=[11994], 5.00th=[13042], 10.00th=[13698], 20.00th=[15008],
     | 30.00th=[16057], 40.00th=[17433], 50.00th=[19792], 60.00th=[22938],
     | 70.00th=[25822], 80.00th=[27919], 90.00th=[29754], 95.00th=[31065],
     | 99.00th=[35914], 99.50th=[39584], 99.90th=[63177], 99.95th=[69731],
     | 99.99th=[86508]
   bw ( MiB/s): min= 968, max= 2447, per=99.91%, avg=1504.58, stdev=33.71, samples=1428
   iops : min= 7742, max=19574, avg=12032.96, stdev=269.66, samples=1428
  lat (usec) : 100=0.01%, 250=0.01%, 500=0.01%, 750=0.01%, 1000=0.01%
  lat (msec) : 2=0.01%, 4=0.08%, 10=25.17%, 20=49.70%, 50=24.89%
  lat (msec) : 100=0.15%
  cpu : usr=2.39%, sys=3.58%, ctx=1022869, majf=0, minf=1
  IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.2%, 16=71.0%, 32=28.8%, >=64=0.0%
     submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%
     complete : 0=0.0%, 4=94.1%, 8=4.1%, 16=1.7%, 32=0.1%, 64=0.0%, >=64=0.0%
     issued rwts: total=722806,722753,0,0 short=0,0,0,0 dropped=0,0,0,0
     latency : target=0, window=0, percentile=100.00%, depth=32

Run status group 0 (all jobs):
   READ: bw=1506MiB/s (1579MB/s), 1506MiB/s-1506MiB/s (1579MB/s-1579MB/s), io=88.2GiB (94.8GB), run=60015-60015msec
  WRITE: bw=1506MiB/s (1579MB/s), 1506MiB/s-1506MiB/s (1579MB/s-1579MB/s), io=88.3GiB (94.8GB), run=60015-60015msec
```

DD (`cd /mnt/pool/test && dd if=/dev/zero of=file bs=1048576`):

```
96702+0 records in
96701+0 records out
101398347776 bytes transferred in 154.331941 secs (657014662 bytes/sec)
```

That is 657MB/s for about 100GB in 154 seconds.
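The figures in that `dd` summary are consistent with each other: 96,701 full 1MiB records is exactly the reported byte count, and dividing by the elapsed time gives the rate. A small sketch of that arithmetic (the helper name `rate_mbs` is hypothetical):

```shell
#!/bin/sh
# Hypothetical helper: MB/s (decimal) from a byte count and elapsed seconds.
rate_mbs() {
  awk -v b="$1" -v s="$2" 'BEGIN { printf "%.1f\n", b / s / 1000000 }'
}

RECORDS=96701                  # "records out" from dd
BS=1048576                     # bs=1048576 (1MiB)
BYTES=$(( RECORDS * BS ))      # should equal the byte count dd reported
echo "bytes: $BYTES"
echo "rate: $(rate_mbs "$BYTES" 154.331941) MB/s"
```

That works out to about 657MB/s, or roughly 627MiB/s in binary units.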

Windows file transfer:

To show the copy isn't limited by the reads on the client machine:

Windows Local File copy:

Thank you in advance for any help you're able to provide.

Thanks

H