Jason Brunk

Dabbler

- Joined: Jan 1, 2016

- Messages: 28

yeah I don't care what the topic is.. you have 8000+ messages on anything, you know your #$%^ lol

> I have never had problems till lately with the network dropping.

It's entirely possible that something else is causing your network drops, but it's important to eliminate the obvious before exploring more exotic possibilities.

He has A LOT of input on EVERYTHING.

> It's entirely possible that something else is causing your network drops, but it's important to eliminate the obvious before exploring more exotic possibilities.

Well, the network dropped this morning even after removing that cache drive the other day. So at least we know for certain that wasn't the culprit. I did grab a debug right after the network services came back online. Would anyone be willing to take a peek at it?

> What he's describing is *SO* much like the filer just being overwhelmed and things timing out, I'd say the likelihood of it being something else is remote. There's no harm to setting up an ongoing ping session somewhere to monitor the filer, but with 8GB and L2ARC and iSCSI and RAIDZ, everything I've seen over the years screams "totally inadequate system." I never really appreciated just how bad it was until I had actually tried a whole bunch of combinations, at which point my opinion on RAM roughly doubled and my disdain for RAIDZ grew to a point where I simply wouldn't suggest anyone use it except on massively large systems with large numbers of vdevs, or maybe extremely light usage.

With your opinions of RAIDZ what recommendations would you have now? Different OS? hardware raid?
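For the "ongoing ping session" idea mentioned above, here is a minimal Python sketch. It swaps ICMP ping for a plain TCP connect, since that needs no special privileges, and records only the moments the filer changes between reachable and unreachable. The names `tcp_probe` and `monitor` are made up for illustration; nothing here comes from FreeNAS itself.

```python
import socket
import time
from typing import Callable, List, Optional, Tuple


def tcp_probe(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port opens within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def monitor(
    probe: Callable[[], bool],
    samples: int,
    interval: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.time,
) -> List[Tuple[float, str]]:
    """Call probe `samples` times and record each up/down transition."""
    events: List[Tuple[float, str]] = []
    last: Optional[bool] = None
    for i in range(samples):
        up = probe()
        if up != last:
            # Only state changes are logged, so a long outage is one entry.
            events.append((clock(), "up" if up else "down"))
            last = up
        if i < samples - 1:
            sleep(interval)
    return events
```

Something like `monitor(lambda: tcp_probe("192.0.2.10", 3260), samples=3600)` would watch for an hour at one probe per second. The address is a placeholder and 3260 is the usual iSCSI target port, so adjust both for your filer. Comparing the recorded "down" times against the ESXi side should show whether the drops line up.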

I haven't seen a lot of talk about CPU, so I am guessing the big resource hog is the RAM. For my (what now is obviously small) install, if I throw more memory at it, it seems the general consensus is that is probably the best way to improve my system.

Thanks for the compliment, but I feel compelled to point out that at five years, that only works out to maybe four or five messages a day.

It's more like I sit around and have time to spout crap while waiting for other things I'm doing to finish. :)

Anyways, the sad/bad news is that iSCSI on ZFS tends to consume ungodly amounts of resources in order to perform well, but once you do commit that, it pretty much kicks the crap out of a conventional hard drive based array. There isn't a ton of middle ground, either. If you do it right with ARC and L2ARC on the read side, and keeping a good amount of free space and multiple vdevs on the write side, it moves along very nicely. But as an example, in order to get 7TB of good quality usable space on the VM filer here, I'm looking at a 24-bay system stuffed with 2TB 2.5" laptop-ish hard drives, with 128GB of RAM and 1TB of L2ARC. That's 48TB of HDD, 1TB of flash, and 128GB of RAM to get 7TB of nonsucky HDD storage.
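To put rough numbers on that, here is a small Python sketch of the arithmetic. The post gives the raw figures (24 drives of 2TB, 7TB usable) but not the pool layout, so the mirror widths and the 50% fill ceiling below are assumptions for illustration only. The function names are hypothetical, and the sketch ignores ZFS metadata overhead and TB vs TiB differences.

```python
def raw_tb(disks: int, disk_tb: float) -> float:
    """Total raw capacity of all drives, in TB."""
    return disks * disk_tb


def usable_tb(disks: int, disk_tb: float, mirror_width: int, max_fill: float) -> float:
    """Capacity left after mirroring and after capping how full the pool gets.

    mirror_width: drives per mirror vdev (assumed, not stated in the post).
    max_fill: fraction of the pool you allow yourself to fill (assumed).
    """
    vdevs = disks // mirror_width  # each mirror vdev stores one drive's worth
    return vdevs * disk_tb * max_fill
```

With the figures from the post, `raw_tb(24, 2.0)` is 48TB, so 7TB usable is about 14.6% of raw. As a comparison, `usable_tb(24, 2.0, 2, 0.5)` gives 12.0 and `usable_tb(24, 2.0, 3, 0.5)` gives 8.0, so the 7TB figure evidently includes more headroom or redundancy than a plain two-way mirror at half full.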

> Thanks for the compliment, but I feel compelled to point out that at five years, that only works out to maybe four or five messages a day.

Glad I can help out with the quota :)

If you study discussions related to block storage on FreeNAS (of which iSCSI is one example) in these forums, you'll see the same recommendations repeated:With your opinions of RAIDZ what recommendations would you have now? Different OS? hardware raid?

This is the most cost-effective single improvement you can make to any FreeNAS system.if I throw more memory at it, it seems the general consensus is that is probably the best way to improve my system.

Robert,If you study discussions related to block storage on FreeNAS (of which iSCSI is one example) in these forums, you'll see the same recommendations repeated:

For #2, you use striped mirrors. The others should be self-explanatory.

- Server grade hardware = necessary.

- More vdevs = better (higher IOPS).

- More RAM = better.

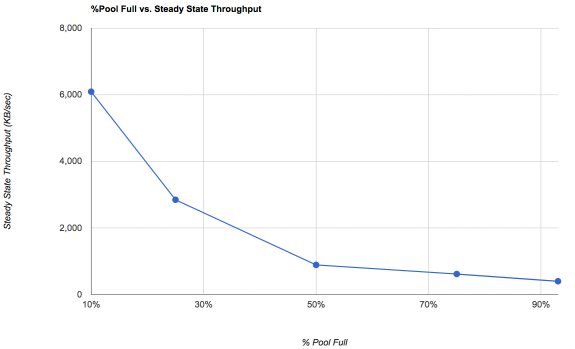

- More capacity = better (don't use more than 50% of pool capacity).

This is the most cost-effective single improvement you can make to any FreeNAS system.

Mirrors for iSCSI not raidz, unless you have an all flash pool.Robert,

1. i have server hardware (obviously not nearly enough lol)

2. i am pretty sure i have stripped raidz, is there a better config?

3. this is becoming PAINFULLY obvious :)

Thanks for the feedback :)

ah, I see.Mirrors for iSCSI not raidz, unless you have an all flash pool.

> figured the 2 onboard intel nics would work

They're Intel, which is a preferred brand. I can't comment on whether the specific chipsets (i210 + i217LM) have issues.

> They're Intel, which is a preferred brand. I can't comment on whether the specific chipsets (i210 + i217LM) have issues.

So far I tried one onboard NIC, then switched to the other. Next step will be to try my PCI card and see if it goes away. I have also been reading A LOT of other forums and guides and think a boost in memory is going to be coming out of the tax return :)

Looks like in addition to the memory I will be doing some house cleaning as well to free up more space. :)

WARNING: 10.250.101.32 (iqn.1998-01.com.vmware:esxi02-59532b10): no ping reply (NOP-Out) after 5 seconds; dropping connection

ix0: <Intel(R) PRO/10GbE PCI-Express Network Driver, Version - 3.1.13-k> port 0xe880-0xe89f mem 0xf9e80000-0xf9efffff,0xf9f78000-0xf9f7bfff irq 30 at device 0.0 on pci8
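If you want to see how often each initiator gets dropped, a rough Python sketch like the one below can tally the NOP-Out warnings. The pattern is inferred only from the single WARNING line quoted above, so other wordings of the message would be missed, and `count_drops` is a made-up helper name.

```python
import re
from collections import Counter
from typing import Iterable, Tuple

# Pattern inferred from the one WARNING line in this thread (an assumption).
DROP_RE = re.compile(
    r"WARNING: (?P<ip>\S+) \((?P<iqn>[^)]+)\): "
    r"no ping reply \(NOP-Out\) after (?P<secs>\d+) seconds; dropping connection"
)


def count_drops(lines: Iterable[str]) -> Counter:
    """Count dropped-connection warnings per (initiator IP, initiator IQN)."""
    counts: Counter = Counter()
    for line in lines:
        match = DROP_RE.search(line)  # search() tolerates a timestamp prefix
        if match:
            key: Tuple[str, str] = (match.group("ip"), match.group("iqn"))
            counts[key] += 1
    return counts
```

Feed it the lines of whatever log or debug file contains the warnings, for example `count_drops(open(path))` with `path` pointing at your own file. If one ESXi host shows up far more than the others, that points at that host or its path rather than the filer.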