While TrueNAS 12.0 (CORE and Enterprise editions) continues its release march, we’re also busy getting the first version of TrueNAS SCALE into the hands of many tech-savvy users. TrueNAS SCALE 20.10-ALPHA is planned for October and will be codenamed “Angelfish”.

As our initial community post on SCALE indicated, TrueNAS SCALE is defined by its acronym:

TrueNAS starts from the TrueNAS 12.0 base which includes OpenZFS, all the storage services, the middleware to coordinate these, and the web UI to present a user-oriented view of the system. This base has been tested by hundreds of thousands of users over the last few years.

The good news is that nearly all of this base has been preserved with relatively small software changes. For Enterprise users, it has also been possible to port over the High Availability (HA) software, enclosure management, and other Enterprise features. This means that SCALE will be able to run on TrueNAS M-Series and X-Series systems in the future and take advantage of the redundancy.

Staying close to TrueNAS 12.0 is awesome because the UX will be similar, which minimizes the training needed to get up to speed on TrueNAS SCALE. But it’s what you can do with TrueNAS SCALE that’s most exciting. The new capabilities being added define the new opportunities for SCALE:

- KVM Virtualization: Mature Hypervisor with good reliability, Guest OS support, and enterprise features.

- Kubernetes: Applications can be single (Docker) containers or pods of containers.

- Scale-out ZFS: SCALE will enable datasets to be defined as ZFS datasets or cluster datasets which span multiple nodes and ZFS pools. Cluster datasets will have a variety of redundancy properties and still support ZFS snapshots.

Unlike other Hyperconverged Infrastructure solutions, TrueNAS SCALE will have deployment benefits as a single node, an HA system, or a cluster of multiple nodes. Start with a single-node system and, in the future, you will be able to scale out.

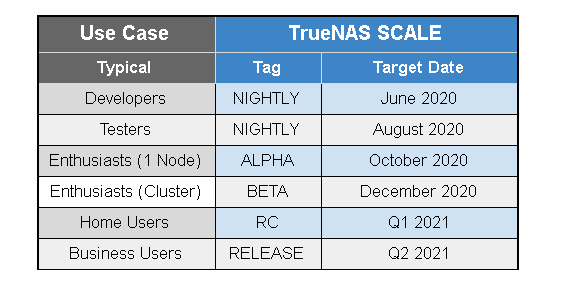

Given the amount of existing software and new software, we have a release plan that lets the community confidently test and deploy SCALE as it becomes available. The high-level plan follows this process.

“ANGELFISH”

Release numbering will be based on Year and Month. The first numbered release will come out in October and will be called TrueNAS SCALE 20.10 (Angelfish). The codenames will be alphabetically sequential and will be associated with aquatic animals that have scales or swim in schools (clusters).
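As a rough illustration of the year-and-month numbering, here is a minimal Python sketch. The function names `scale_version` and `parse_scale_version` are hypothetical and not part of TrueNAS. The sketch assumes a two-digit year and a two-digit month separated by a dot, as in 20.10, with an optional suffix such as -ALPHA.

```python
from datetime import date
from typing import Tuple


def scale_version(release_date: date) -> str:
    """Format a release date as YY.MM (assumed scheme, e.g. October 2020 -> '20.10')."""
    return f"{release_date.year % 100:02d}.{release_date.month:02d}"


def parse_scale_version(version: str) -> Tuple[int, int]:
    """Split 'YY.MM' (with an optional '-SUFFIX') into (full year, month)."""
    numeric = version.split("-", 1)[0]   # drop a suffix such as '-ALPHA'
    yy, mm = numeric.split(".")
    year, month = 2000 + int(yy), int(mm)
    if not 1 <= month <= 12:
        raise ValueError(f"month out of range in version {version!r}")
    return year, month


print(scale_version(date(2020, 10, 1)))
print(parse_scale_version("20.10-ALPHA"))
```

Because the number is derived from the calendar, sorting releases by the parsed (year, month) pair also sorts them chronologically.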

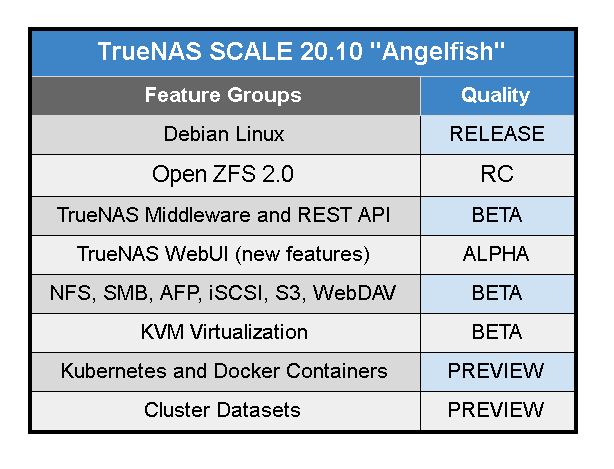

The focus is on characterizing “feature groups” as either PREVIEW, ALPHA, BETA, RC, or RELEASE quality. Users should read the release notes to confirm support for their particular use case. Angelfish is almost feature complete in the NIGHTLY releases and includes the following feature groups:

It should be noted that KVM has little testing by this community but is widely used elsewhere. Kubernetes will also be based on stable, released code, but the WebUI and Middleware are expected to be PREVIEW quality.

Clustered datasets require some additional TrueCommand features (expected in November) to provide an easy-to-use WebUI. In the meantime, the CLI and APIs can be tested and this feature group is classified as PREVIEW status.

We appreciate the community feedback and bug reports and hope to get all those features to RELEASE quality faster.

Is TrueNAS SCALE for Users or Developers?

Right now, TrueNAS SCALE is for developers and bug hunters and can be downloaded here. For Linux developers, there are many opportunities to contribute to the Open Source TrueNAS SCALE project. We have made it a very well coordinated and managed environment to develop the best Open Hyperconverged Infrastructure. For more information, see this Community post.

The TrueNAS SCALE Angelfish releases in Q4 will be good for tech-savvy enthusiasts and testers. We’ll let you know when TrueNAS SCALE 20.10 is ready.

In 2021, TrueNAS SCALE is expected to get to full RELEASE quality for a clustered system.

If you have any additional questions or need advice on a new project, please email us at info@iXsystems.com. We are standing by to help.