sysadmin97 (Cadet, joined Dec 19, 2021, 3 messages)

Hello everyone,

I'm still fairly new to TrueNAS and have a curious problem with my TrueNAS box.

All testing was done on a single-SSD stripe pool with no compression and a record size of 1 MiB. The problem also occurs on my large HDD pool.

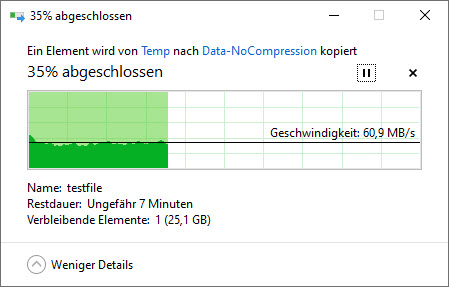

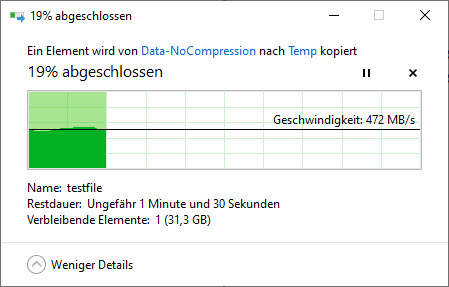

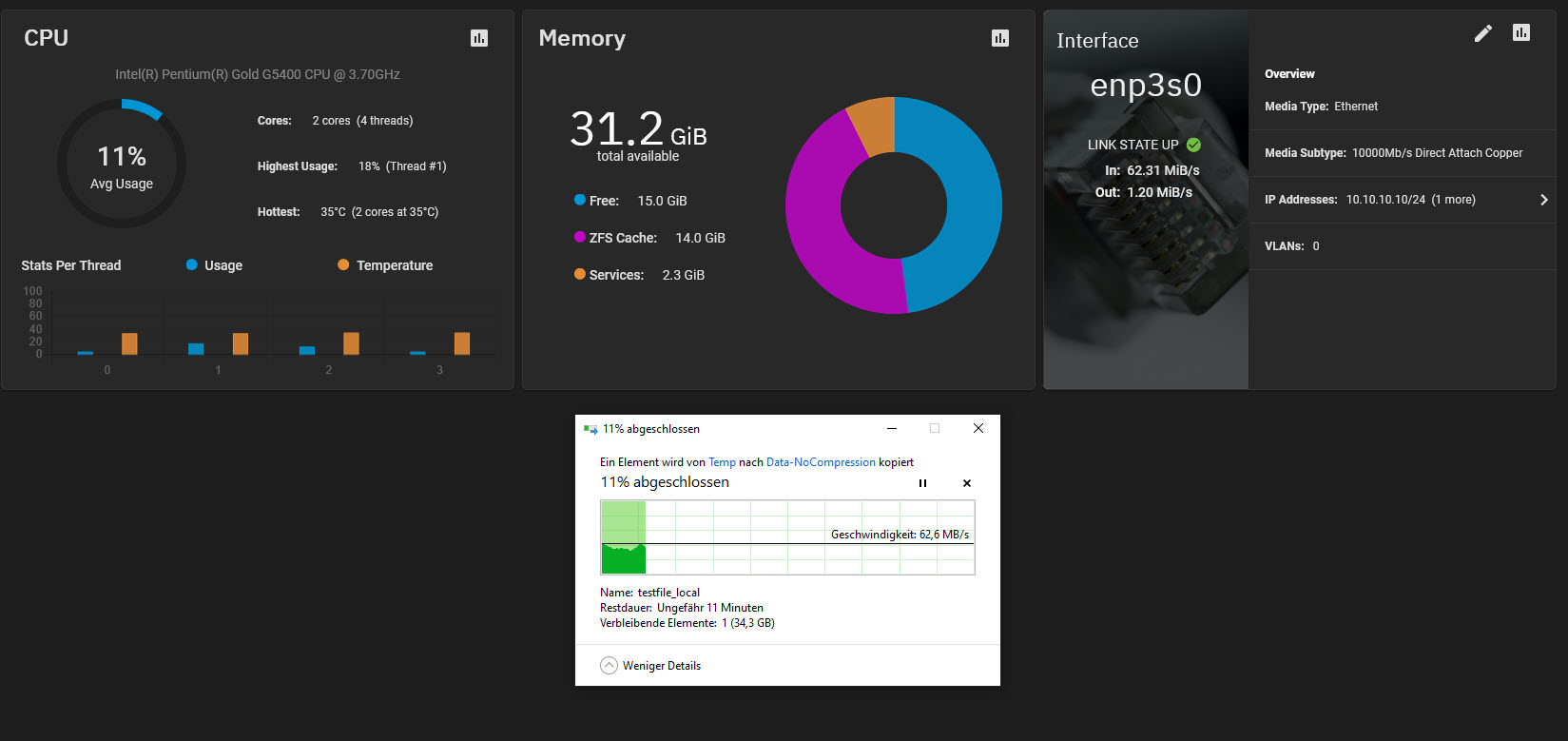


Intel(R) Pentium(R) Gold G5400 CPU @ 3.70GHz

PRIME B360-PLUS

32 GB RAM (no ECC)

Broadcom SAS 9300-8i

Intel® 82599ES 10 Gigabit Ethernet Controller

8 x 9.1 TiB Seagate Exos X16 (Raidz2)

1x 167.68 GiB Intel SSD for Testing (Stripe)

When I write to my TrueNAS box over SMB (or any other protocol), write performance is terrible at ~60 MB/s. Read performance is much more reasonable at ~470 MB/s. The CPU of the TrueNAS box never exceeded 30% load during those transfers.

I also tried multiple cables and even a direct connection between my computer and the on-board 1 Gbit port of the TrueNAS system, but sadly the results stayed the same.
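For reference, the raw ceiling of a link can be estimated by dividing its line rate by eight. This is only a rough sketch: `link_ceiling_mb` is a hypothetical helper, and it ignores Ethernet, TCP and SMB overhead, so real transfers will land somewhat below these figures.

```shell
#!/bin/sh
# Hypothetical helper: convert a line rate in Gbit/s to a raw ceiling in MB/s.
# Protocol overhead is ignored, so real transfers land below this value.
link_ceiling_mb() {
    awk -v gbit="$1" 'BEGIN { printf "%d\n", gbit * 1000 / 8 }'
}

link_ceiling_mb 1    # on-board 1 Gbit port
link_ceiling_mb 10   # Intel 82599ES 10 Gbit port
```

A ~470 MB/s read is above the roughly 125 MB/s ceiling of a 1 Gbit link, so it may be worth double-checking which interface each test actually ran over.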

Windows Copy write over SMB:

Windows Copy read over SMB:

Compared with the internal dd results, there is a huge discrepancy in the write speeds:

Write:

Code:

root@truenas[/mnt/SSD-Share/Data-NoCompression]# dd if=/dev/zero of=/mnt/SSD-Share/Data-NoCompression/testfile bs=4M count=10000

10000+0 records in

10000+0 records out

41943040000 bytes (42 GB, 39 GiB) copied, 82.5708 s, 508 MB/s

Read:

Code:

root@truenas[/mnt/SSD-Share/Data-NoCompression]# dd of=/dev/zero if=/mnt/SSD-Share/Data-NoCompression/testfile bs=4M count=10000

10000+0 records in

10000+0 records out

41943040000 bytes (42 GB, 39 GiB) copied, 78.1081 s, 537 MB/s
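As a sanity check on those figures, the rates dd prints can be recomputed from the byte count and elapsed time. A minimal sketch, where `throughput_mb` is a hypothetical helper and MB means 10^6 bytes, as in dd's own output:

```shell
#!/bin/sh
# Hypothetical helper: throughput in MB/s (10^6 bytes) from bytes and seconds.
throughput_mb() {
    awk -v bytes="$1" -v secs="$2" 'BEGIN { printf "%.0f\n", bytes / secs / 1000000 }'
}

throughput_mb 41943040000 82.5708   # local write run
throughput_mb 41943040000 78.1081   # local read run
```

This gives 508 and 537, matching what dd reported, so the local write rate is roughly eight times the ~60 MB/s seen over SMB.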

I haven't touched any Samba configuration, but here is the output of testparm anyway:

Code:

root@truenas[/mnt/SSD-Share/Data-NoCompression]# testparm -s

Load smb config files from /etc/smb4.conf

lpcfg_do_global_parameter: WARNING: The "syslog only" option is deprecated

Loaded services file OK.

Weak crypto is allowed

Server role: ROLE_DOMAIN_MEMBER

# Global parameters

[global]

allow trusted domains = No

bind interfaces only = Yes

client ldap sasl wrapping = seal

disable spoolss = Yes

dns proxy = No

domain master = No

kerberos method = secrets and keytab

load printers = No

local master = No

logging = file

max log size = 5120

preferred master = No

printcap name = /dev/null

realm = HOME.INTERN

registry shares = Yes

restrict anonymous = 2

security = ADS

server min protocol = SMB2

server multi channel support = No

server role = member server

server string = TrueNAS Server

template homedir = /var/empty

template shell = /bin/sh

winbind cache time = 7200

winbind enum groups = Yes

winbind enum users = Yes

winbind max domain connections = 10

workgroup = HOME

idmap config home : sssd_compat = false

idmap config home : backend = rid

idmap config * : range = 90000001 - 100000000

idmap config home : range = 100000001 - 200000000

idmap config * : backend = tdb

create mask = 0775

directory mask = 0775

[Share]

access based share enum = Yes

ea support = No

kernel share modes = No

path = /mnt/TrueNas/Share

posix locking = No

read only = No

smbd max xattr size = 2097152

vfs objects = streams_xattr shadow_copy_zfs acl_xattr zfs_core io_uring

tn:vuid =

fruit:time machine max size = 0

fruit:time machine = False

tn:home = False

tn:path_suffix =

tn:purpose = NO_PRESET

[SSD-Share]

ea support = No

kernel oplocks = Yes

nt acl support = No

path = /mnt/SSD-Share

read only = No

vfs objects = shadow_copy_zfs acl_xattr zfs_core io_uring

tn:vuid =

fruit:time machine max size = 0

tn:path_suffix =

fruit:time machine = False

tn:home = False

tn:purpose = NO_PRESET

I also checked whether TrueNAS recognized all the devices correctly by running lspci, and as far as I can tell everything looks fine on that side:

Code:

root@truenas[/mnt/SSD-Share/Data-NoCompression]# lspci

00:00.0 Host bridge: Intel Corporation Device 3e0f (rev 07)

00:01.0 PCI bridge: Intel Corporation 6th-10th Gen Core Processor PCIe Controller (x16) (rev 07)

00:02.0 VGA compatible controller: Intel Corporation CoffeeLake-S GT1 [UHD Graphics 610]

00:14.0 USB controller: Intel Corporation Cannon Lake PCH USB 3.1 xHCI Host Controller (rev 10)

00:14.2 RAM memory: Intel Corporation Cannon Lake PCH Shared SRAM (rev 10)

00:16.0 Communication controller: Intel Corporation Cannon Lake PCH HECI Controller (rev 10)

00:17.0 SATA controller: Intel Corporation Cannon Lake PCH SATA AHCI Controller (rev 10)

00:1b.0 PCI bridge: Intel Corporation Cannon Lake PCH PCI Express Root Port #21 (rev f0)

00:1c.0 PCI bridge: Intel Corporation Cannon Lake PCH PCI Express Root Port #5 (rev f0)

00:1d.0 PCI bridge: Intel Corporation Cannon Lake PCH PCI Express Root Port #9 (rev f0)

00:1d.2 PCI bridge: Intel Corporation Cannon Lake PCH PCI Express Root Port #11 (rev f0)

00:1d.3 PCI bridge: Intel Corporation Cannon Lake PCH PCI Express Root Port #12 (rev f0)

00:1f.0 ISA bridge: Intel Corporation Device a308 (rev 10)

00:1f.3 Audio device: Intel Corporation Cannon Lake PCH cAVS (rev 10)

00:1f.4 SMBus: Intel Corporation Cannon Lake PCH SMBus Controller (rev 10)

00:1f.5 Serial bus controller [0c80]: Intel Corporation Cannon Lake PCH SPI Controller (rev 10)

01:00.0 Serial Attached SCSI controller: Broadcom / LSI SAS3008 PCI-Express Fusion-MPT SAS-3 (rev 02)

03:00.0 Ethernet controller: Intel Corporation 82599ES 10-Gigabit SFI/SFP+ Network Connection (rev 01)

05:00.0 PCI bridge: ASMedia Technology Inc. ASM1083/1085 PCIe to PCI Bridge (rev 04)

07:00.0 Ethernet controller: Realtek Semiconductor Co., Ltd. RTL8111/8168/8411 PCI Express Gigabit Ethernet Controller (rev 15)

I really don't know what to think anymore. The pool itself delivers the performance, but somehow it gets lost as soon as the data enters the server. Average CPU usage never exceeded 30%, so there is more than enough headroom for compression and the like, and over 40% of the RAM is still available.
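One caveat on the local numbers: dd without a sync option can report a rate that partly reflects buffered writes. A small sketch of a variant that flushes before reporting, assuming a dd that supports `conv=fsync` (GNU coreutils does). `TARGET_DIR` is a placeholder that defaults to a temp directory, and the size is kept tiny on purpose; for a real measurement it would point at the dataset under test with a much larger count.

```shell
#!/bin/sh
# Sketch: write test that forces data to stable storage before dd reports.
# TARGET_DIR is a placeholder; point it at the dataset under test.
TARGET_DIR="${TARGET_DIR:-${TMPDIR:-/tmp}}"
testfile="$(mktemp "$TARGET_DIR/ddtest.XXXXXX")"

# conv=fsync makes dd flush the output file before exiting, so the
# reported rate includes the time needed to reach the disk.
dd if=/dev/zero of="$testfile" bs=1M count=8 conv=fsync 2>&1 | tail -n 1

# Confirm how much was actually written, then clean up.
written_bytes="$(wc -c < "$testfile" | tr -d ' ')"
echo "wrote $written_bytes bytes"
rm -f "$testfile"
```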

Do you guys maybe know what could be a possible reason for that kind of behavior?

Thanks in advance for your help.