We have a pool set up with two 800 GB Intel 750 PCIe NVMe SSDs (tested with and without a Samsung 850 Pro as SLOG).

We are connecting over 10GbE iSCSI to VMware 6.0. The guest OS is Windows Server 2012.

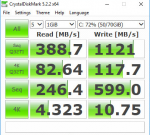

Speeds are good across the board except for 4K at a queue depth of 1 (4K QD1), where we see only about 4 MB/s read and 10 MB/s write. Neither value changes much with or without the 850 Pro as SLOG.

When testing on a local SSD (just on my desktop), we see more like 20 MB/s reads and 82 MB/s writes.
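At a queue depth of 1 only one request is in flight at a time, so throughput is set by per-request latency rather than by raw disk bandwidth. The sketch below converts the numbers above into IOPS and average latency per I/O. It assumes 4096-byte blocks and that the benchmark reports MB as 10^6 bytes; the helper name `qd1_stats` is hypothetical.

```python
# Hypothetical helper: convert 4K QD1 throughput into IOPS and per-I/O latency.
# Assumptions: 4096-byte blocks, and "MB/s" means 10**6 bytes per second.
BLOCK_SIZE = 4096  # bytes per I/O (assumed 4 KiB)


def qd1_stats(mb_per_s: float, block_size: int = BLOCK_SIZE) -> tuple[float, float]:
    """Return (IOPS, average latency in microseconds) at queue depth 1."""
    iops = mb_per_s * 1_000_000 / block_size
    # With only one I/O outstanding, average latency is simply 1 / IOPS.
    latency_us = 1_000_000 / iops
    return iops, latency_us


measurements = {
    "iSCSI read": 4,
    "iSCSI write": 10,
    "local SSD read": 20,
    "local SSD write": 82,
}

for name, mbps in measurements.items():
    iops, lat = qd1_stats(mbps)
    print(f"{name:16s} {mbps:3d} MB/s -> {iops:8.0f} IOPS, ~{lat:6.0f} us per I/O")
```

By this arithmetic, the 4 MB/s read works out to roughly 1 ms per request, versus roughly 200 µs on the local SSD. That suggests the time is being spent on per-request round trips somewhere along the path rather than on the disks themselves.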

This wasn't an issue until a database was recently placed on the datastore. Reports now take two to three times as long to run as they did on an old physical server with spinning disks.

I've tweaked various tunables based on posts I've found. Some of the other scores improved, but nothing has bumped up the 4K QD1 numbers.

`gstat -a` shows the disks only 10-15% busy during the 4K QD1 read test and about 20-25% busy during the write test.

Any help would be appreciated, as I know these disks are capable of much more than we're getting. I've attached a benchmark screenshot to illustrate the point.