So for this question, I need to give a bit of background.

BACKGROUND: MY SYSTEM UNDER 11.3:

My pool data is very large, very dedupable files (20TB of VMs), to the point that the extra hardware for dedup was worth it to save HDD costs (3.5 x dedup, I'm saving about 200TB of SAS3 raw disk cost with dedup).

To handle dedup, the structure under 11.3 has been:

I largely fixed the metadata issue by rebuilding the pool and changing its block size/metaslab count (there's a Matt Ahrens paper on that), and also set a tunable to preloading it. But similar options don't existed for DDT. So despite my best efforts, when I write a big file >20GB, gstat showed a periodic flood of 4k DDT reads lasting 30 - 90 seconds a time, easily enough to stall the actual file operation.

Trying to load the relevant parts of a 50GB DDT using 4k IO honestly doesn't work :) Of course that left ARC warm, so rerunning the file operation worked very fast, but that's not an answer. But v12 was coming, so I bided my time.....

PROPOSED SYSTEM CHANGES WITH v12:

Under TrueNAS Core v12 I can offload metadata, spacemaps, and DDT, all to a special vdev chosen to handle 4k IO incredibly well. Also I notice for future that DDT preloading/persistent L2ARC and other things are in the OpenZFS pipeline.

So my v12 rebuild will have mirrored optane 905p 480GB as a special class vdev. With luck, the only remaining problem will be CPU demand of DDT hashing, and that's fixable with a better CPU if a problem. But then I thought......

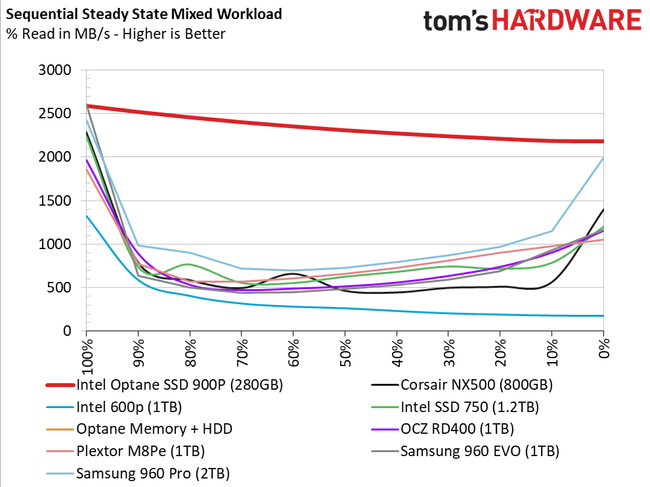

Optane is so good at low queue depth 4k IO and generally, that it leaves me wondering about whether I can leverage this idea even further......

MY QUESTIONS:

These seem straightforward but I'd like to check :)

BACKGROUND: MY SYSTEM UNDER 11.3:

My pool data consists of very large, highly dedupable files (20TB of VMs), to the point that the extra hardware for dedup was worth it to save HDD costs (3.5x dedup ratio; I'm saving about 200TB of raw SAS3 disk cost with dedup).

To handle dedup, the structure under 11.3 has been:

- 4 core Xeon

- 3 way mirrored enterprise SAS3 for the pool (for speed/resilience)

- 256GB DDR4 @ 2400, to allow caching of the entire DDT and metadata as well as files, with ease. (I set a 140GB ARC reservation on metadata to prevent any eviction)

- 480GB Optane 905p (PCIe 3.0 x4) L2ARC, added later to try and improve things.

- P3700 (PCIe) for SLOG/ZIL, although so far it's been all FreeBSD+Samba so I haven't had much sync IO to make use of it.

I largely fixed the metadata issue by rebuilding the pool and changing its block size/metaslab count (there's a Matt Ahrens paper on that), and also set a tunable to preload it. But similar options don't exist for the DDT. So despite my best efforts, when I write a big file (>20GB), gstat shows a periodic flood of 4k DDT reads lasting 30-90 seconds at a time, easily enough to stall the actual file operation.

Trying to load the relevant parts of a 50GB DDT using 4k IO honestly doesn't work :) Of course that left ARC warm, so rerunning the file operation worked very fast, but that's not an answer. But v12 was coming, so I bided my time.....
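To put rough numbers on why 4k reads hurt so much, here is a back-of-envelope sketch. The 50GB DDT size and 4k read size come from the description above; the IOPS figures are illustrative assumptions, not measurements from this system, and the calculation is a worst-case upper bound (reading the whole table cold from one device), whereas a real stall only touches part of it.

```python
# Back-of-envelope: time to read a DDT as 4k random reads.
# IOPS values are ASSUMED for illustration only, not measured.

def seconds_to_read(total_bytes: int, io_bytes: int, iops: float) -> float:
    """Time to read total_bytes as io_bytes-sized random reads at a fixed IOPS rate."""
    reads = total_bytes / io_bytes
    return reads / iops

DDT_BYTES = 50 * 10**9   # ~50GB DDT (from the post)
IO_BYTES = 4 * 1024      # 4k reads, as seen in gstat

ASSUMED_IOPS = {
    "single SAS HDD": 200,      # assumed random-read IOPS
    "Optane 905p": 100_000,     # assumed low-queue-depth IOPS
}

reads_needed = DDT_BYTES // IO_BYTES
for device, iops in ASSUMED_IOPS.items():
    secs = seconds_to_read(DDT_BYTES, IO_BYTES, iops)
    print(f"{device}: {reads_needed:,} reads, about {secs / 3600:.2f} hours")
```

Even if only a small fraction of the table is needed for a given write, the gap between the two assumed device classes is a few hundred times, which is the whole argument for putting the DDT on something built for small random reads.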

PROPOSED SYSTEM CHANGES WITH v12:

Under TrueNAS Core v12 I can offload metadata, spacemaps, and DDT to a special vdev chosen to handle 4k IO incredibly well. I also notice that DDT preloading, persistent L2ARC, and other things are in the OpenZFS pipeline for the future.

So my v12 rebuild will have mirrored Optane 905p 480GB drives as a special class vdev. With luck, the only remaining problem will be the CPU demand of DDT hashing, and that's fixable with a better CPU if it becomes a problem. But then I thought......

Optane is so good at low queue depth 4k IO, and in general, that it leaves me wondering whether I can leverage this idea even further......

MY QUESTIONS:

- So, I'm thinking of partitioning each Optane's nominal 480GB into nominal 430GB + 50GB, so instead of 1 x 480GB mirror, ZFS sees a 430GB mirror (special vdev) + a 50GB mirror (SLOG). Redundancy is still good, and a 430GB mirror is still plenty for my foreseeable metadata+DDT needs.

Usually the recommendation is to give ZFS entire disks, but that's for efficiency purposes. I suspect that won't be an issue here, or that the gains will outweigh the losses.

As I see it, by moving SLOG to my special vdev devices, ZFS ends up with a mirrored Optane SLOG device that's probably many times faster (latency-wise) than my P3700, even if I allow for it sharing IO with a special metadata vdev, and moving from write only to mixed RW. And now it'll be redundant as well. And have mirrored speed on reads. And I'm not using sync IO much anyway so most of the time it's irrelevant.

Q: Is ditching the P3700 and moving SLOG to a partition on my mirrored Optane special vdev devices a total win, or am I missing something?

- I'm also considering whether I need my existing L2ARC any more. After all, it can't possibly be faster than the special vdev, and I only got it to try and help with metadata/DDT caching/eviction. There's plenty of RAM to cache all metadata and over 100GB of file data as well. With metadata/DDT on mirrored Optane anyway, the L2ARC seems redundant now.

Q: Is there any real benefit to keeping L2ARC given these changes, if I don't feel a need to cache file data beyond what RAM can hold, and if I set a sufficient reservation on ARC metadata to prevent eviction from RAM once loaded?
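For concreteness on the first question, the Optane split could look roughly like the sketch below. It is a dry-run planner that only prints commands and executes nothing. The pool name `tank`, the disk names `nvd0`/`nvd1`, and the GPT labels are hypothetical placeholders. It also assumes the special and log vdevs are added to an existing pool from the shell rather than through the TrueNAS UI, and that the SLOG gets a fixed 50G partition with the remainder of each disk going to the special vdev.

```python
# Dry-run planner: builds and prints the commands, does NOT run them.
# Pool name, disk names, and labels are hypothetical placeholders.

def plan_optane_split(pool, disks, slog_size="50G"):
    """Return gpart/zpool commands for a mirrored special vdev plus a mirrored SLOG."""
    cmds = []
    special_parts, slog_parts = [], []
    for i, disk in enumerate(disks):
        cmds.append(f"gpart create -s gpt {disk}")
        # Small fixed-size partition for the SLOG first...
        cmds.append(f"gpart add -t freebsd-zfs -a 1m -s {slog_size} -l slog{i} {disk}")
        # ...then the rest of the disk (no -s given) for the special vdev.
        cmds.append(f"gpart add -t freebsd-zfs -a 1m -l special{i} {disk}")
        slog_parts.append(f"gpt/slog{i}")
        special_parts.append(f"gpt/special{i}")
    cmds.append(f"zpool add {pool} special mirror {' '.join(special_parts)}")
    cmds.append(f"zpool add {pool} log mirror {' '.join(slog_parts)}")
    return cmds

for cmd in plan_optane_split("tank", ["nvd0", "nvd1"]):
    print(cmd)
```

Printing the plan rather than running it leaves room to review it first. In particular, `zpool add` may object that a 2-way special mirror doesn't match the pool's 3-way mirrors; that is worth checking before forcing anything.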

These seem straightforward but I'd like to check :)