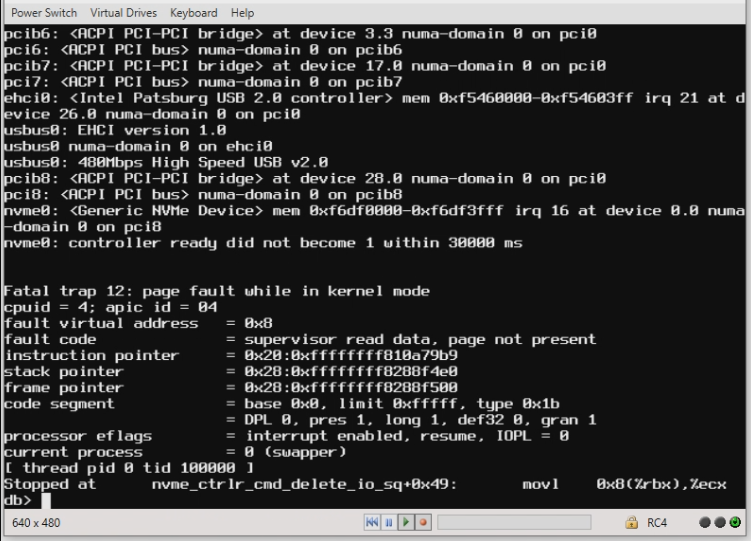

Last Sunday, our Production FreeNAS server (HP DL380e G8, 96GB RAM, boots off tiny mirrored M.2 SSDs; main pool is 12x Seagate Exos 10TB in 4 sets of 3-way mirrors + mirrored 240GB NVMe SLOG w/ power loss protection + L2ARC on a single 500GB M.2 SATA Crucial w/ power loss protection) crashed. The symptom is that it seemingly stops processing iSCSI (Sync=Always, out of paranoia...) traffic for our ESXi hosts. The local console is responsive, but the WebGUI is slow at best until it becomes completely unresponsive. At this point the only option we have is to *try* to reboot via the local console menu and, if that doesn't work, press-and-hold the power button... Last Sunday was the 2nd or 3rd time this has happened, except that this time the server would not reboot afterwards, failing with the following error mentioning the NVMe drives.

Unlike non-booting SATA & SAS drives, NVMe drives seem to have a dangerous ability to crash a system on a whim via kernel panics, NMIs, and/or BSODs (and the like). For example, I have a brand new WD 500GB Black NVMe drive, and it crashes a Windows server just trying to format the device, just like its predecessor 250GB version did...

I got onsite, powered off the server, and removed both NVMe drives. Afterwards, the system would boot but would NOT mount the main pool. I put the NVMe pair back in and the system DID boot and attach the main pool (maybe the complete power off helped, who knows...):

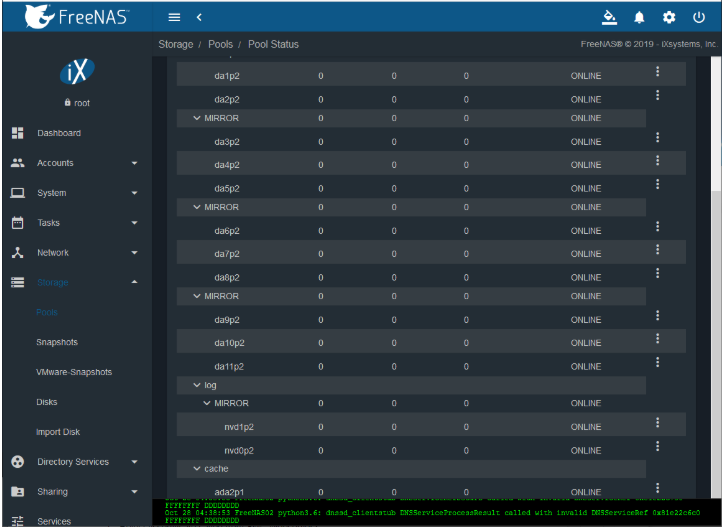

At the time, I thought I'd rather have no SLOG (and take the performance hit) than have a SLOG that could crash the Production FreeNAS at any given moment (and all the 'political'/business fallout that comes with downtime...). Simply pulling the drives didn't work, so I figured that I needed to remove them from the pool via the WebGUI.

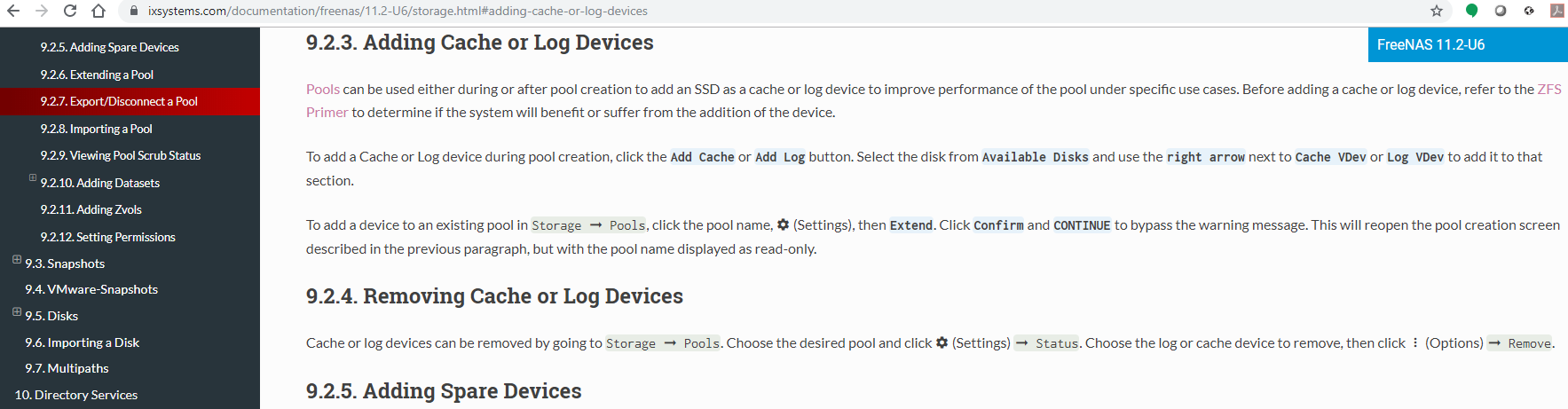

I tried to remove the entire SLOG NVMe pair, but as the screenshot above shows, there's no option to do that. The online manual (https://www.ixsystems.com/documentation/freenas/11.2-U6/storage.html#removing-cache-or-log-devices) says that removing devices from SLOG is supposed to be possible...

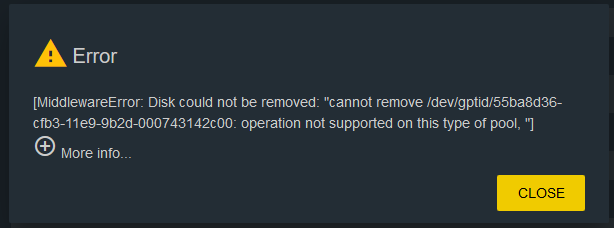

...but when I tried to remove either "nvd1p2" or "nvd0p2", I just got this error saying "Disk could not be removed" & "operation not supported on this type of pool". I tried to Google the error, but found nothing modern. I tried offlining the drive and then removing it, with the same result.

So FreeNAS won't let me remove the NVMe drives, but I can't risk another random crash either. Very weird and concerning, especially since the previous FreeNAS (albeit older software, lower hardware, and slightly different config) has been rock-solid for years of straight use. Earlier that day, I received (bought for a completely unrelated purpose) a pair of Kingston 500GB A2000 M.2 2280 NVMe Internal SSD PCIe Up to 2000MB/S with Full Security Suite SA2000M8/500G drives. I know these are not ideal SLOG drives, but I had no choice but to use them to replace the previous MyDigitalSSD 240GB (256GB) BPX 80mm (2280) M.2 PCI Express 3.0 x4 (PCIe Gen3 x4) NVMe MLC SSD pair which (allegedly) had power loss protection. So I shut down the server, replaced one old 'MyDigitalSSD' with one new 'Kingston', powered the server on, and thank God FreeNAS booted and mounted the pool (albeit in a degraded state due to missing one of the original NVMe drives). From the WebGUI, I used the 'replace' function to replace the NVMe that FreeNAS had listed as missing. FreeNAS resilvered the mirror successfully and the pool was happy/green. So I shut down the server again, replaced the other old MyDigitalSSD with the other new Kingston, and repeated the replace/resilver process, which worked.

So here I am a few days later. The FreeNAS server has seemingly been working fine, but I'm not thrilled about having to use the current Kingston NVMe drives or the fact that FreeNAS won't let me remove the SLOG at all. My inability to find a modern article referencing this issue leads me to believe my GoogleFu is weak or that this is a very unusual, rare, and/or possibly isolated issue. I'm still trying to figure out what my next move (eventually, when the next maintenance window comes up...) should be:

- Should I just leave it as is? (Not liking this option...)

- Should I replace the current Kingston NVMe drives with another set of NVMe drives despite their crazy high crash risk? (I've been having similar issues with NVMe drives even in other/unrelated systems lately.) If so, what's the current *affordable* (i.e. $50-$200 each) fan-favorite?

- If I go this route, is there a better procedure than 'power off -> replace 1 -> power on -> resilver -> power off -> replace other -> power on -> resilver'?

- Should I look for an HP 663280-B21 rear SFF/2.5" drive cage to hang 2 more SFF drives (off the backside) to replace the NVMe drives?

- Maybe 2 mechanical HDDs (or maybe consumer level SSDs trading speed for endurance...) behind a RAID controller as suggested by jgreco?

> An interesting but unorthodox alternative for SLOG is to use a RAID controller with battery backed write cache, along with conventional hard disks. Normally RAID controllers are frowned upon with ZFS, but here is an opportunity to take advantage of the capabilities: Since the cache absorbs sync writes and writes them as the disk allows, rotational latency becomes a nonissue, and you gain a SLOG device that can operate at the speed the drives are capable of writing at. In the case of a LSI 2208 with 1GB cache, and a pair of slowish ~50MB/sec 2.5" hard drives, it was interesting to note that a burst of ZIL writes could be absorbed by the cache at lightning speed, and then ZIL writes would slow down to the 50MB/sec that the drives were capable of sustaining. With the nearly unlimited endurance of battery-backed RAM and conventional hard drives, this is a very promising technique.

- Maybe 2 SSDs w/ power protection, like low-level enterprise SSDs or Crucial MX500 consumer-level SSDs, which are known for their "Currently unreadable (pending) sectors" issue... If I go this route, what's the current *affordable* (i.e. $50-$200 each) fan-favorite SFF SSD?
